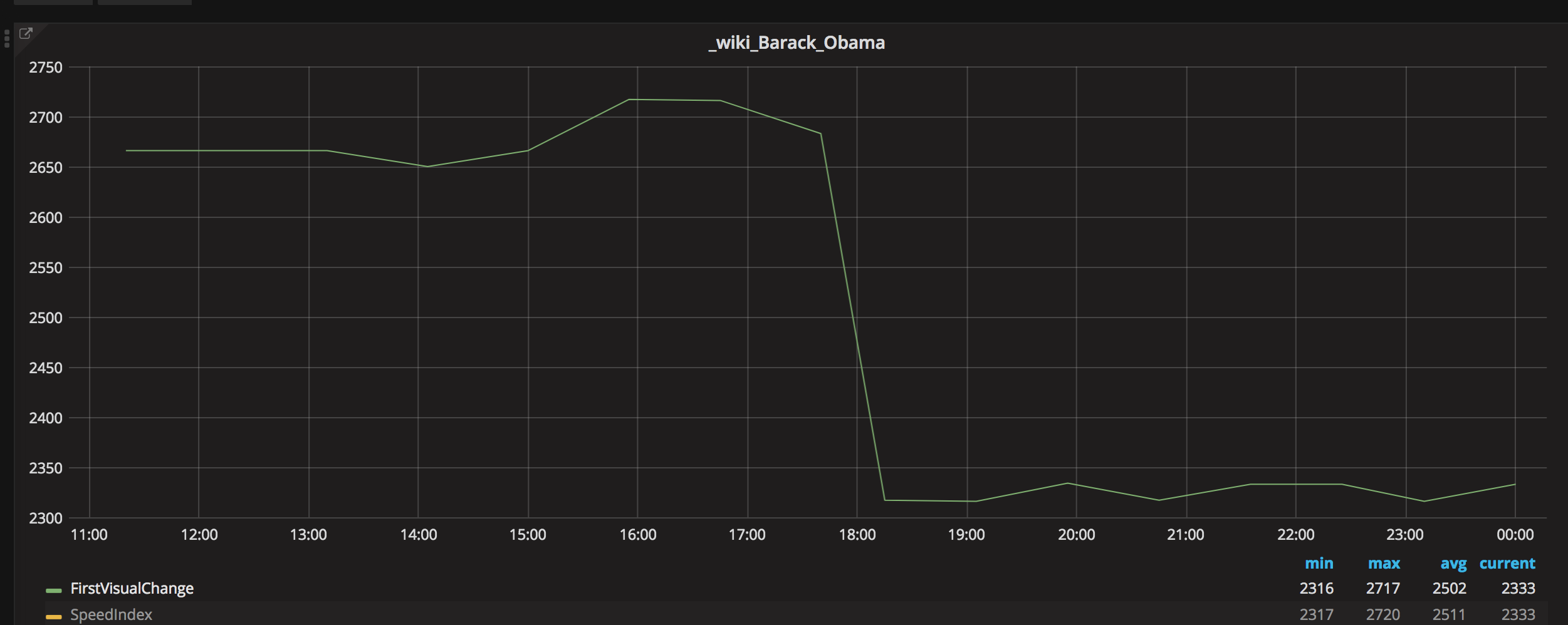

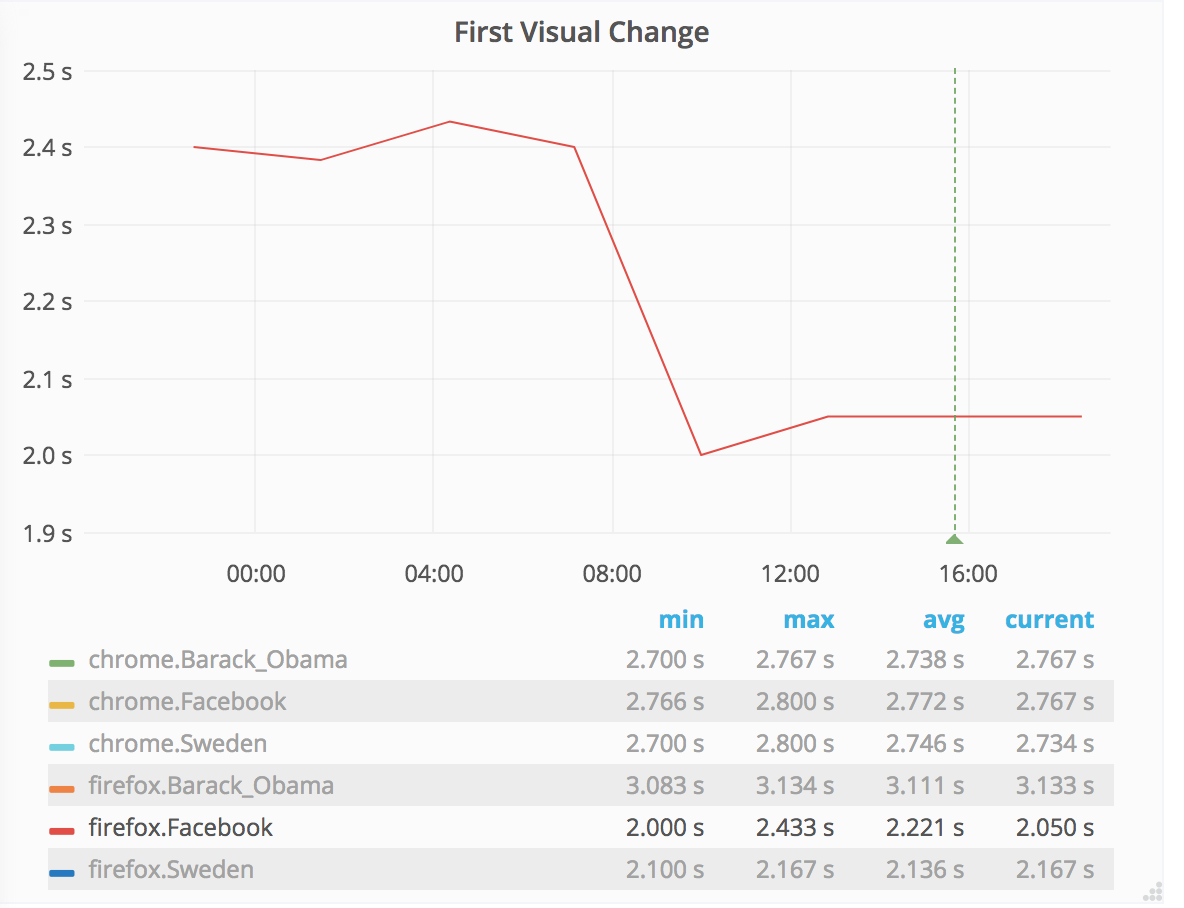

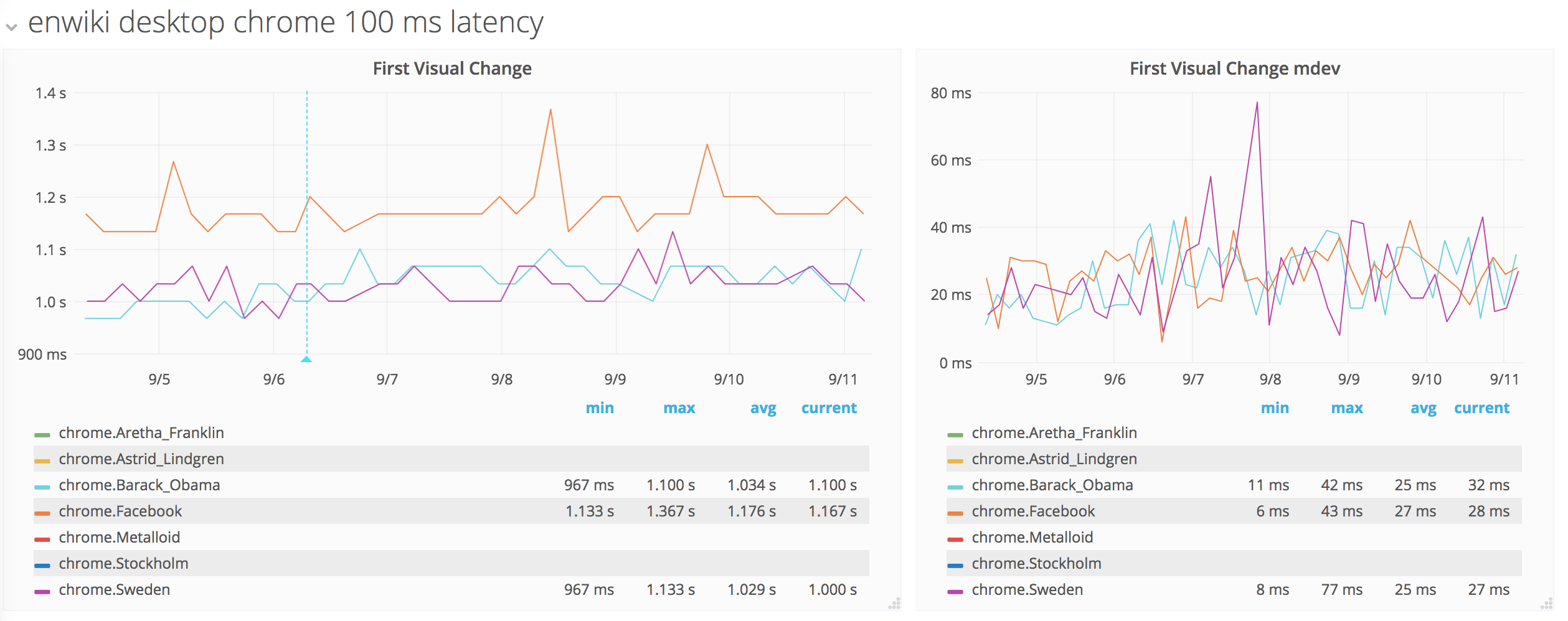

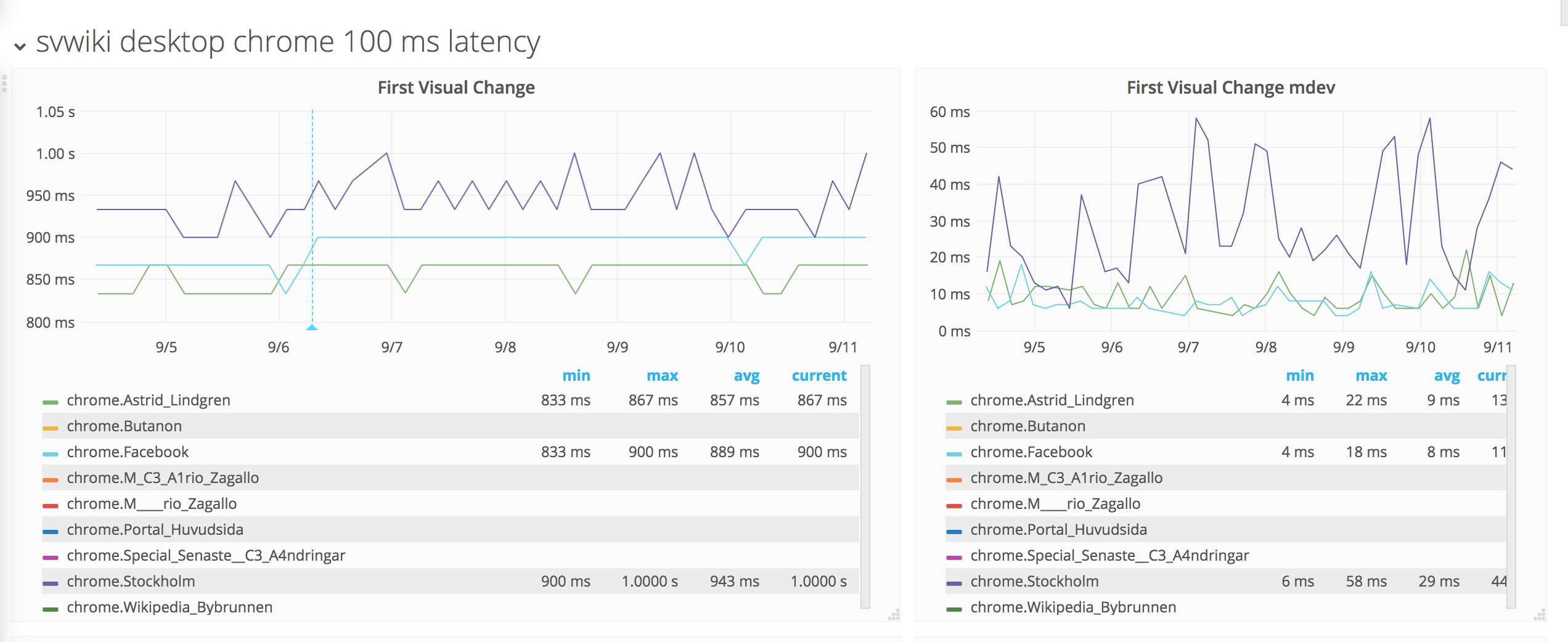

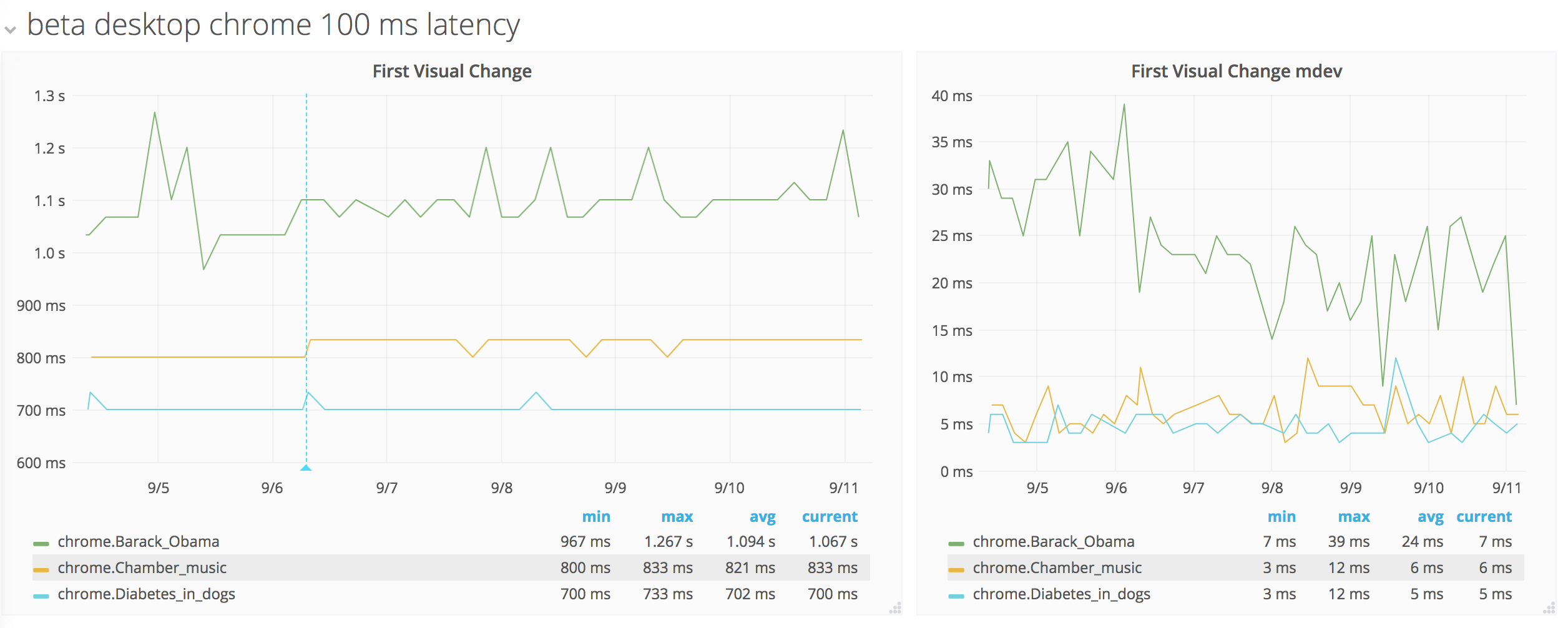

At the moment we run our tests on AWS c4.large instances, using WebPageReplay to replay our pages. When we test on desktop with Chrome, we can find regressions in first visual change of 40 ms or more. With Chrome we also use the Chrome trace log (which gives us CPU stats on where Chrome spends its time), and there we can find regressions of 20 ms or more. For example, this setup helped us find a regression in Chrome 67 when the Chrome team changed their music/video player (https://bugs.chromium.org/p/chromium/issues/detail?id=849108).
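To make the thresholds concrete, here is a minimal sketch of how such a check could look. It is not our actual alerting code: the function name `find_regression`, the use of the median, and the sample numbers are all assumptions made for illustration.

```python
from statistics import median


def find_regression(baseline_runs_ms, current_runs_ms, threshold_ms):
    """Return the median increase in ms if it reaches the threshold, else None.

    Hypothetical helper: comparing medians is an assumption, not necessarily
    how the real alerts are computed.
    """
    delta = median(current_runs_ms) - median(baseline_runs_ms)
    return delta if delta >= threshold_ms else None


# Made-up first visual change values (ms), for illustration only.
baseline = [500, 510, 495, 505, 500]
current = [545, 550, 540, 555, 548]

# 40 ms is the first visual change threshold mentioned above for Chrome.
print(find_regression(baseline, current, threshold_ms=40))
```

With a stable browser the run-to-run noise stays well below the threshold, so a small shift in the median is enough to flag a regression.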

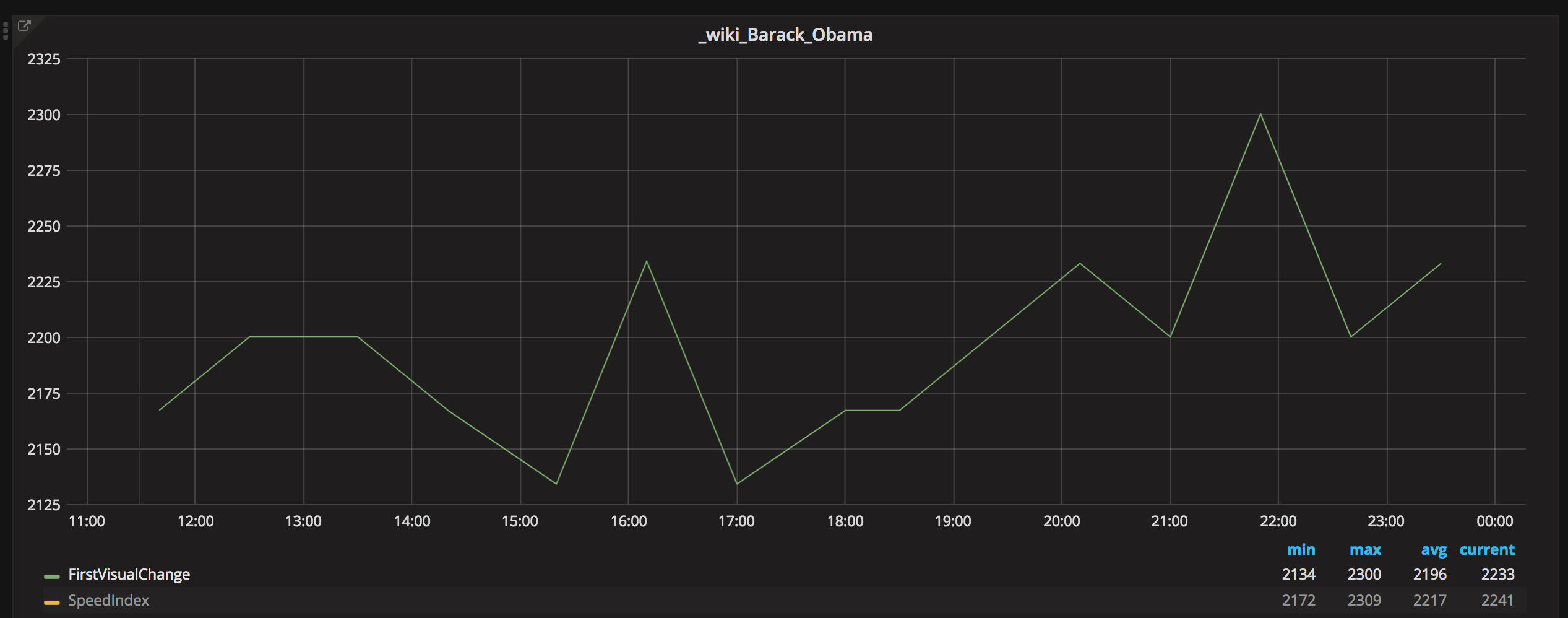

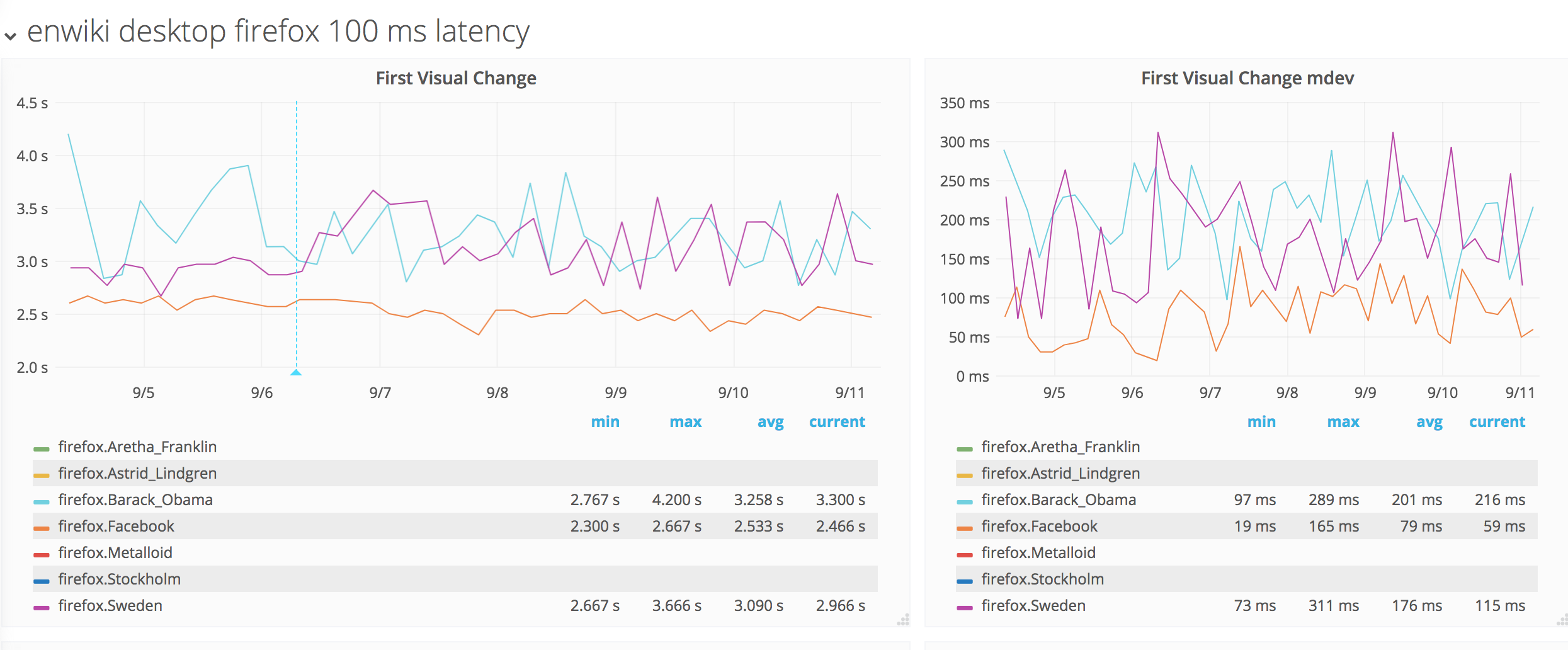

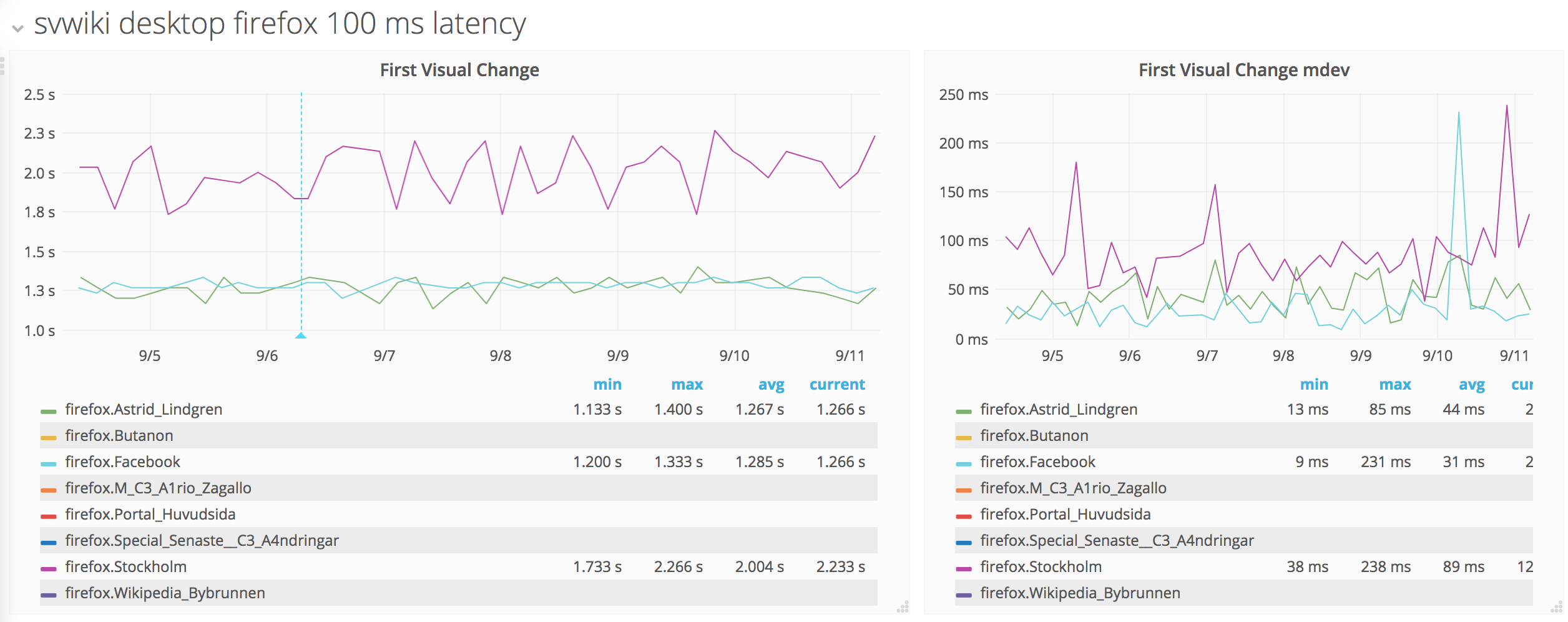

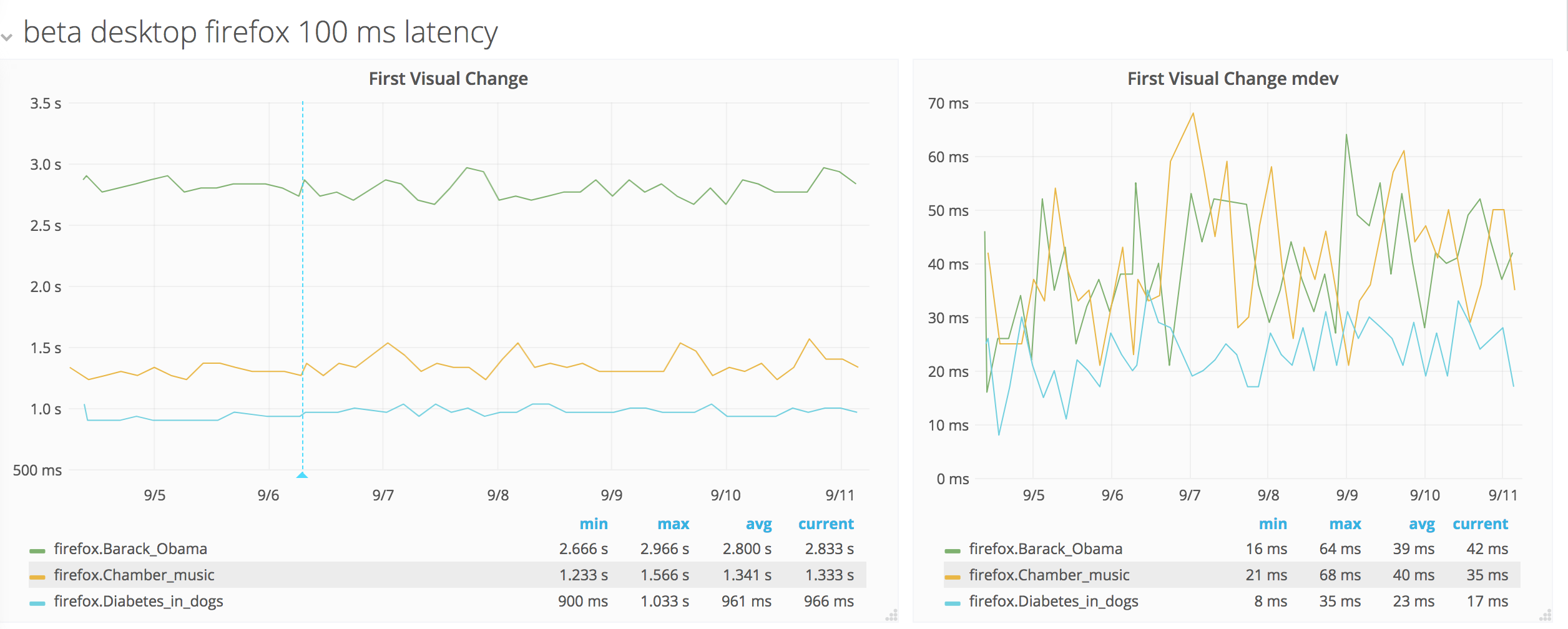

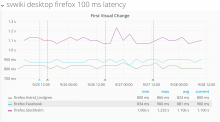

With Firefox we don't get metrics that are stable enough to alert on. Our alert threshold would have to be something like 500 ms. We have turned off everything (that we know about) that can make Firefox metrics unstable (like the background update of 3rd party blacklists), but it doesn't help. Most of our pages have two buckets of metrics. For example, if we make 11 test runs, X runs are fast and Y runs are slow (and the difference can be around 1 second, so it is a big diff). With Chrome the diff is at most 100 ms.
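The two-bucket pattern can be illustrated with a small sketch. This is only an assumption about how one might detect it: the helper `split_buckets` is hypothetical, it simply cuts the sorted runs at the largest gap, and the 11 values are made up rather than taken from our measurements.

```python
from statistics import median


def split_buckets(runs_ms):
    """Split runs into a fast and a slow bucket at the largest gap.

    Hypothetical helper: returns (fast, slow, difference between the
    bucket medians in ms).
    """
    ordered = sorted(runs_ms)
    if len(ordered) < 2:
        return ordered, [], 0
    # Gaps between neighbouring runs once they are sorted.
    gaps = [b - a for a, b in zip(ordered, ordered[1:])]
    cut = gaps.index(max(gaps)) + 1
    fast, slow = ordered[:cut], ordered[cut:]
    return fast, slow, median(slow) - median(fast)


# Made-up metrics (ms) from 11 runs, for illustration only.
runs = [1200, 1230, 2250, 1210, 2300, 1190, 2210, 1220, 2280, 1205, 1215]
fast, slow, diff = split_buckets(runs)
print(len(fast), "fast runs,", len(slow), "slow runs, diff", diff, "ms")
```

If the difference between the buckets is far larger than the spread inside each bucket, a single median or mean over all runs jumps around depending on how many runs land in each bucket, which is what makes alerting on it unreliable.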

We see unstable metrics with WebPageTest without WebPageReplay too, so it doesn't seem to be tool related. However, some URLs are more sensitive than others, so content matters.

This could be something special about Wikipedia pages that doesn't work well with Firefox, something we do wrong in our Firefox setup with the tools, or a generic Firefox problem.