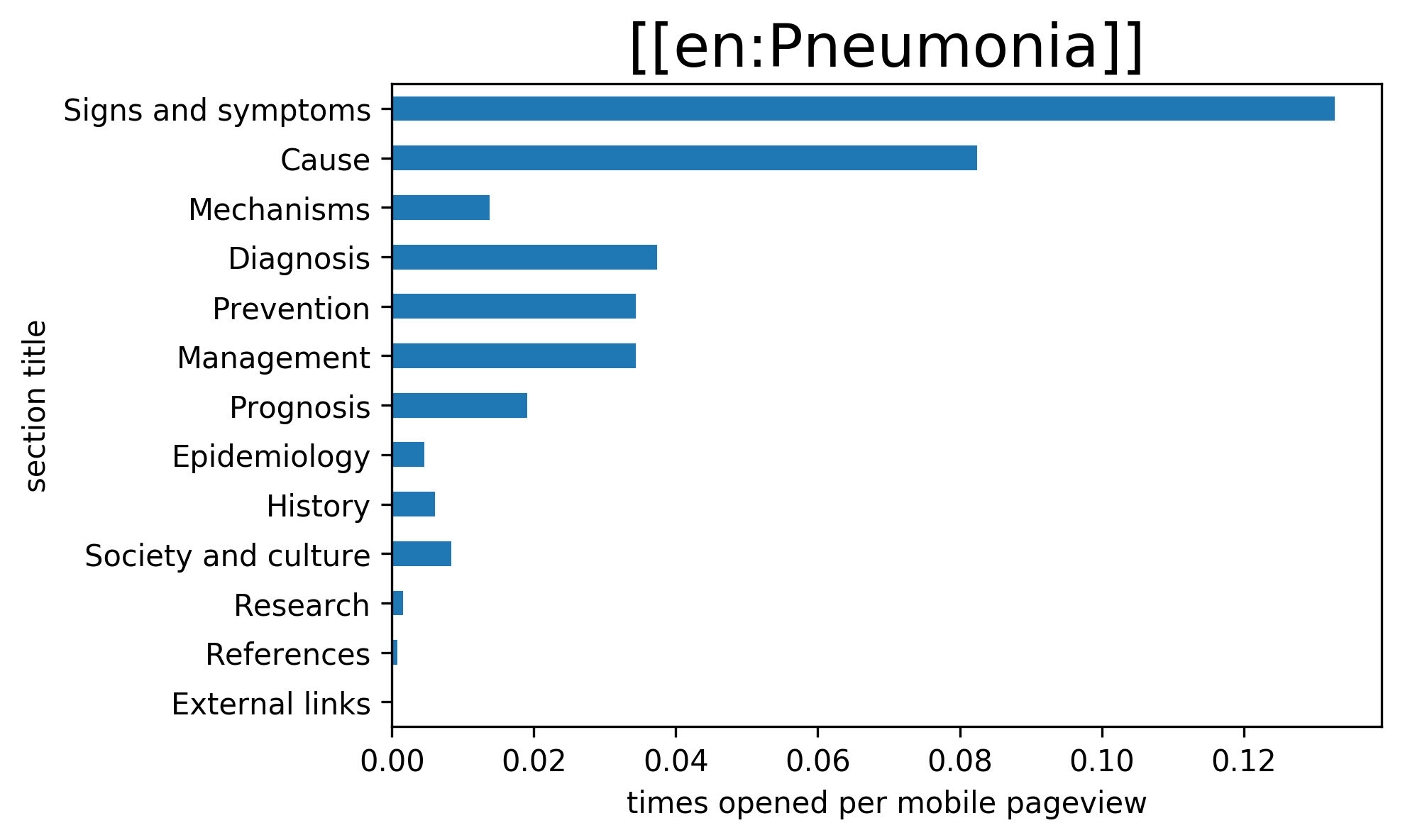

From the 2015/16 A/B test of collapsed/uncollapsed sections on mobile web, we got as a byproduct some very interesting data about the differing levels of interest by readers for the individual sections of a Wikipedia article, e.g.:

During the Q&A of @Tbayer's recent Wikimania presentation about this and related data, it was suggested to take this a step further and enable Wikipedia editors to test which of two alternative headings for a given section works best for readers. @Doc_James gives the example of deciding between "epidemiology" and "frequency" in articles about diseases.

Making this possible will likely involve the following parts:

- Reactivate Schema:MobileWebSectionUsage in some form, possibly in connection with the upcoming work on T199157: [Spike ??hrs] Sticky header instrumentation. Thanks to the new Hive-based EventLogging setup deployed last year, we should be able to use a higher sampling rate than back then, making this data usable for more articles than just the highest-traffic ones. It will likely still be too noisy to draw solid conclusions about low-traffic articles.

- Update Schema:MobileWebSectionUsage to take into account the mobile setting that lets users have sections expanded by default, since this affects the initial expanded/collapsed state of each section.

- Serve two different versions of a section heading as part of an A/B test, as specified by editors via, e.g., a magic word or template (say {{SECTIONVERSIONS|Epidemiology|Frequency}} or similar).
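To put "too noisy for low-traffic articles" into rough numbers, here is a minimal sketch of a standard sample-size estimate for a two-sided two-proportion z-test. It assumes the metric is a proportion, such as the share of sampled page views in which a given section is expanded; the function names, the 10% vs 12% expand rates and the 10% sampling rate are hypothetical, and the 20% arm share is borrowed from the 20/20/60 straw man split discussed under Developer notes.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_a: float, p_b: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Sampled page views needed in EACH arm to detect a change in a
    proportion (e.g. share of views in which a section is expanded)
    from p_a to p_b with a two-sided two-proportion z-test."""
    if p_a == p_b:
        raise ValueError("p_a and p_b must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # ~1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # ~0.84 for 80% power
    p_bar = (p_a + p_b) / 2
    root = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
            + z_beta * math.sqrt(p_a * (1 - p_a) + p_b * (1 - p_b)))
    return math.ceil(root ** 2 / (p_a - p_b) ** 2)


def pageviews_needed(n_per_arm: int, arm_share: float,
                     sampling_rate: float) -> int:
    """Total article page views so that an arm receiving `arm_share` of
    views, logged at `sampling_rate`, collects n_per_arm events."""
    return math.ceil(n_per_arm / (arm_share * sampling_rate))


# Hypothetical inputs: expand rate 10% vs 12%, 20% arm share, 10% sampling.
n = sample_size_per_arm(0.10, 0.12)
print(n, pageviews_needed(n, arm_share=0.20, sampling_rate=0.10))
```

With these made-up inputs, the estimate comes out at roughly 3,800 sampled views per arm, or on the order of 190,000 article page views in total, which illustrates why a higher sampling rate matters and why low-traffic articles are likely to stay out of reach.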

Developer notes

I (@Jdlrobson) can see at least three tasks as part of this epic.

Restoring Schema:MobileWebSectionUsage

The client-side code was removed a long time ago (two years ago):

https://gerrit.wikimedia.org/r/#/c/mediawiki/extensions/MobileFrontend/+/267729/

Since we have a reference point from doing this before, it should be easier to restore this code than to write it from scratch, although much of the code has changed since this patch. Hopefully this will be a 5-point estimate.

Serving two different versions of a section heading as part of an A/B test: straw man proposal

To run an A/B test against anonymous users, we are restricted to sampling by page rather than by user session.

I would thus recommend that we create a magic word to serve different headings.

Given a section heading "Prognosis", editors would need to opt individual pages into the A/B test like so:

== {{SectionAB:Prognosis}} ==

On the server side, we might render this magic word as "Prognosis" 20% of the time and as "Likelihood of recovery" 20% of the time. The remaining 60% of the time (the control group), we would use the current heading, i.e. "Prognosis". We would then record in Schema:MobileWebSectionUsage which group the user was assigned to. From this, we would be able to measure which section heading was more successful.
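The 20/20/60 split above can be sketched as follows. The proposal says "X% of the time"; this sketch assumes instead that the assignment is made deterministic by hashing the page ID together with a test name, so that a given page always lands in the same group and that group can be logged alongside the event. The group names, `assign_group` and `heading_for` are hypothetical and not part of MediaWiki.

```python
import hashlib

# Hypothetical group names; the 20/20/60 split is the one proposed above.
SPLIT = (("original", 20), ("alternative", 20), ("control", 60))


def assign_group(page_id: int, test_name: str) -> str:
    """Deterministically assign a page to an A/B group (sampling by page)."""
    key = f"{test_name}:{page_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100  # 0..99
    upper = 0
    for group, percent in SPLIT:
        upper += percent
        if bucket < upper:
            return group
    raise ValueError("SPLIT percentages must add up to 100")


def heading_for(group: str, original: str, alternative: str) -> str:
    """Only the 'alternative' group sees the new heading; the 'original'
    arm and the control group both see the current heading."""
    return alternative if group == "alternative" else original


group = assign_group(12345, "SectionAB:Prognosis")
print(group, heading_for(group, "Prognosis", "Likelihood of recovery"))
```

Hashing rather than drawing a random number per render keeps the assignment reproducible, which makes it straightforward to check afterwards which group each logged page view belonged to.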

To allow editors to run arbitrary tests, we'd need some way to register them. I'd recommend doing so via an interface page, e.g. MediaWiki:SectionABTest, that maps section headings to alternative section headings:

Prognosis: Likelihood of recovery
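A minimal sketch of reading such an interface page, assuming one "Original heading: Alternative heading" pair per line as in the example above. The function name, the choice to ignore blank lines, and the choice to reject malformed or duplicate lines are all assumptions for illustration.

```python
from typing import Dict


def parse_section_tests(page_text: str) -> Dict[str, str]:
    """Parse lines of the form 'Original heading: Alternative heading'
    into a mapping {original: alternative}. Blank lines are ignored."""
    tests: Dict[str, str] = {}
    for raw_line in page_text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        # Split on the FIRST colon only, so the alternative may contain ':'.
        original, sep, alternative = line.partition(":")
        original, alternative = original.strip(), alternative.strip()
        if not sep or not original or not alternative:
            raise ValueError(f"Malformed test definition: {raw_line!r}")
        if original in tests:
            raise ValueError(f"Duplicate test for heading: {original!r}")
        tests[original] = alternative
    return tests


config = "Prognosis: Likelihood of recovery\n\nEpidemiology: Frequency\n"
print(parse_section_tests(config))
```

One limitation of this format is that an original heading containing a colon cannot be expressed; a real implementation would need an escaping rule or a different separator.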

Note that we would need to ensure the id and name attributes are retained so that existing links continue to work.
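The idea behind keeping the id stable can be sketched like this: the visible text comes from whichever variant was served, but the anchor is always derived from the original heading, so a link to #Prognosis still resolves for every group. The markup is simplified and hypothetical; MediaWiki's real heading HTML and anchor generation (special characters, duplicate headings) are more involved, and only the space-to-underscore conversion is mirrored here. The name attribute is not covered.

```python
import html


def render_heading(original: str, displayed: str, level: int = 2) -> str:
    """Render a heading whose visible text may be an A/B variant while its
    id stays derived from the ORIGINAL heading, so #Prognosis-style links
    keep working for every group."""
    # Simplification: only spaces are converted; real anchor generation
    # also handles special characters and duplicate headings.
    anchor = original.strip().replace(" ", "_")
    return (f'<h{level} id="{html.escape(anchor, quote=True)}">'
            f'{html.escape(displayed)}</h{level}>')


print(render_heading("Prognosis", "Likelihood of recovery"))
```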

Client-based solution?

Given how important and visible section headings are for the table of contents, collapsed sections and section links within articles, a client-based solution that samples by user, while viable, brings a few more complications: the client-side table of contents, and noticeable changes to the content of the page.

JavaScript code only runs once the DOM is ready, which can happen quite late, so there is a high chance of a jarring, visible update to the page, and to the table of contents on tablet (if it has been opened).

This will be particularly problematic, and unavoidable, when a section link is opened, as the browser will scroll to the heading before it changes.

We could possibly mitigate this with a creative client+server solution that hides or blurs the section heading and content until the A/B test has loaded.

Recommendation: Document A/B test results.

I (@Jdlrobson) would recommend that any outcomes of A/B tests resulting from this work be documented under a dedicated heading on https://www.mediawiki.org/wiki/Recommendations_for_mobile_friendly_articles to guide future editors.

Open questions

Section numbers

I'm not sure whether it would impact the A/B test, but it's possible (likely?) that the further down the page a section is, the less likely it is to be read.

Thus, if we ran an A/B test of "Prognosis" vs "Likelihood of recovery" on a variety of pages where the section heading is positioned in different places, how would we remove noise relating to the position of the section heading?
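One standard option, offered here only as a possible starting point, is stratification: compare the two headings separately within each section position, then average the per-position differences, weighting each position by how much data it has. The sketch below assumes hypothetical events of the form (variant, section position, expanded?), which is not the actual shape of Schema:MobileWebSectionUsage, and uses expand rate as the success metric; regression with position as a covariate would be an alternative.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical event shape: (variant, section position on page, was it expanded?)
Observation = Tuple[str, int, bool]


def stratified_difference(observations: Iterable[Observation],
                          variant_a: str = "original",
                          variant_b: str = "alternative") -> float:
    """Difference in expand rate (variant_b minus variant_a), computed
    separately for each section position and averaged, weighting each
    position by how many observations it has."""
    # counts[position][variant] = [expanded_count, total_count]
    counts: Dict[int, Dict[str, List[int]]] = defaultdict(
        lambda: {variant_a: [0, 0], variant_b: [0, 0]})
    for variant, position, expanded in observations:
        if variant not in (variant_a, variant_b):
            continue  # e.g. control-group events are ignored here
        cell = counts[position][variant]
        cell[0] += int(expanded)
        cell[1] += 1

    weighted_sum, total_weight = 0.0, 0
    for cells in counts.values():
        hits_a, n_a = cells[variant_a]
        hits_b, n_b = cells[variant_b]
        if n_a == 0 or n_b == 0:
            continue  # no within-position comparison possible
        weight = n_a + n_b
        weighted_sum += weight * (hits_b / n_b - hits_a / n_a)
        total_weight += weight
    if total_weight == 0:
        raise ValueError("no section position has data for both variants")
    return weighted_sum / total_weight
```

Because each heading is only compared against the other heading at the same position, a variant that happens to sit lower on the page in more of its sampled articles is not penalised for that. This only works if both headings are observed at overlapping positions; positions seen for just one variant are skipped.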