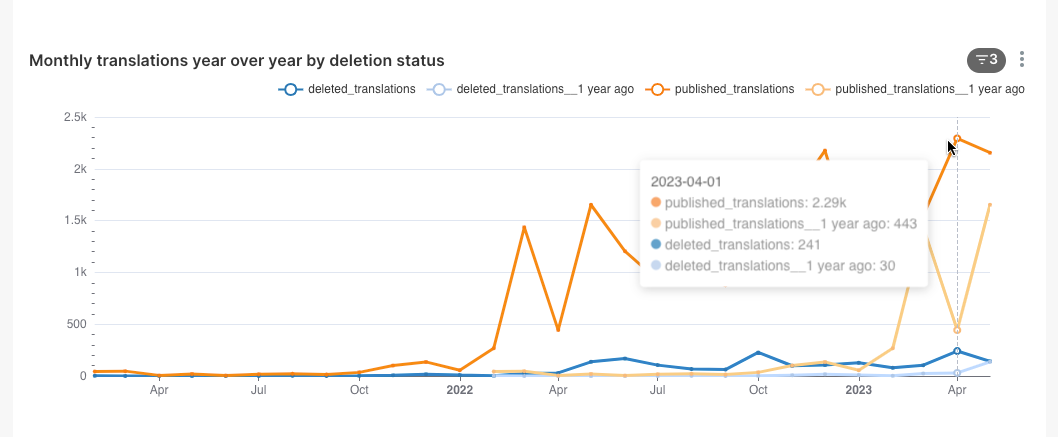

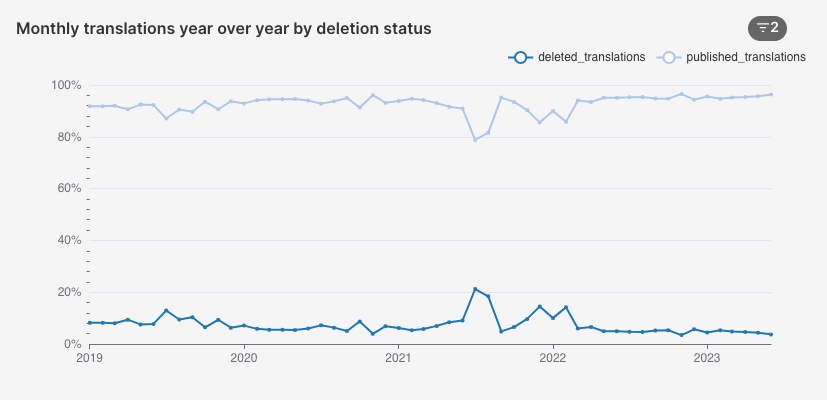

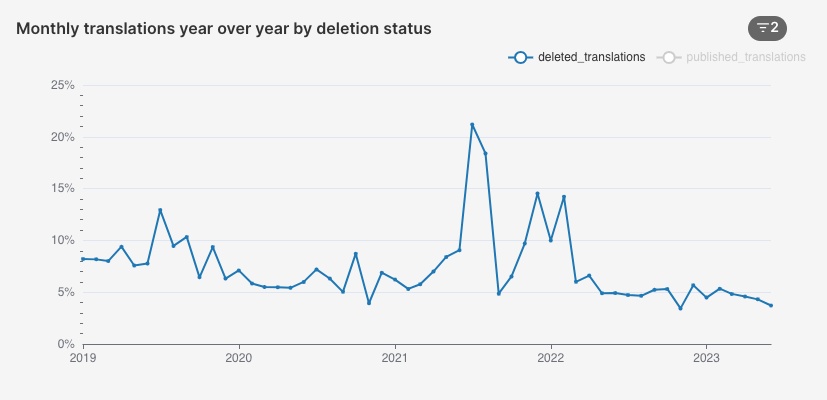

The Content Translation key metrics dashboard shows published translations across multiple graphs, but it gives no information about how many of those were later deleted.

To get a wider picture of what happens to the content created with Content Translation, we want to show, across all languages, the number of "deleted translations" next to the published ones.

This, combined with similar additions to depict translations "published needing review" (T209868), will provide more perspective on the quality of the content created and on the impact that improvements to the tool may have on it.

That is, at a glance we can identify periods when most of the published translations were problematic, by spotting peaks of deletions or of translations needing review.