Based on the categories obtained in T242969, train image classifiers for Commons using pre-trained models.

[If time allows] Train from scratch using an existing architecture.

Description

| Status | Assigned | Task |
|---|---|---|
| Open | Miriam | T155538 General image classifier for commons |
| Open | Miriam | T215413 Image Classification Research and Development |
| Invalid | Miriam | T228441 Design a pipeline for image classification |
| Resolved | Miriam | T242229 Test the feasibility of a classifier trained on Commons categories |
| Resolved | Miriam | T242970 A set of prototypes of image classifiers trained on images from Commons Categories |

Event Timeline

Weekly updates:

- Wrote the TensorFlow code to train classifiers for 10, 50, and 100 categories using Commons images, with two training techniques:
  - transfer learning from an Inception network pre-trained on ImageNet;
  - training from scratch on a simple network (to be replaced with Inception or more complex networks later on).

- Ran the first tests with transfer learning (technique 1) on 10 classes, with an average of 30k images per class. Early results after 1,000 training iterations:
  - Training time is around 20 minutes, excluding the forward step, a one-off step that processes 100 images/sec.
  - Accuracy on the test set is around 75%.

- Now running training for 10, 50, and 100 categories with 1,000 and 5,000 training iterations. Will report results soon.

- One issue we found with @elukey is that the GPU can handle only one process at a time, so all of the experiments above have to be run serially rather than in parallel. Once this set of experiments is done, we will upgrade the whole system and check whether the problem persists.
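A common way to structure transfer learning like technique 1, and one consistent with the "one-off forward step" mentioned above, is to split it into two phases. First, run every image once through the frozen pre-trained network and cache its penultimate-layer ("bottleneck") features. Second, train only a new softmax layer on those cached vectors. With an average of ~30k images for each of 10 classes (~300k images) at 100 images/sec, that single forward pass alone takes roughly 3,000 s, or about 50 minutes. The dependency-free sketch below illustrates only the second phase, assuming the features are already cached. The names `train_softmax_head` and `predict` and the toy 2-D features are hypothetical stand-ins for the much higher-dimensional Inception bottlenecks. This is a sketch of the general technique, not the TensorFlow code used in these experiments.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_softmax_head(features, labels, num_classes, lr=0.5, iterations=200, seed=0):
    """Fit a single softmax layer (weights W, bias b) on frozen, cached features
    using full-batch gradient descent on the mean cross-entropy loss.
    The pre-trained network that produced `features` is never updated."""
    dim = len(features[0])
    rng = random.Random(seed)
    W = [[rng.uniform(-0.01, 0.01) for _ in range(dim)] for _ in range(num_classes)]
    b = [0.0] * num_classes
    n = len(features)
    for _ in range(iterations):
        grad_W = [[0.0] * dim for _ in range(num_classes)]
        grad_b = [0.0] * num_classes
        for x, y in zip(features, labels):
            logits = [sum(w * xi for w, xi in zip(W[k], x)) + b[k] for k in range(num_classes)]
            probs = softmax(logits)
            for k in range(num_classes):
                # Gradient of cross-entropy w.r.t. logit k is p_k - 1[k == y].
                err = probs[k] - (1.0 if k == y else 0.0)
                grad_b[k] += err
                for j in range(dim):
                    grad_W[k][j] += err * x[j]
        # Average the gradients over the batch and take one descent step.
        for k in range(num_classes):
            b[k] -= lr * grad_b[k] / n
            for j in range(dim):
                W[k][j] -= lr * grad_W[k][j] / n
    return W, b


def predict(W, b, x):
    """Return the index of the highest-scoring class for feature vector x."""
    scores = [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy "cached bottleneck" features: three well-separated classes in 2-D.
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9], [-1.0, -1.0], [-0.9, -1.1]]
labels = [0, 0, 1, 1, 2, 2]
W, b = train_softmax_head(features, labels, num_classes=3)
print([predict(W, b, x) for x in features])
```

Because only the small final layer is trained, each training iteration is cheap, and the expensive forward pass is paid once regardless of how many iterations are run.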

Updates:

Training and testing of the classifiers based on pre-trained models concluded.

- Classifiers for 10, 50, and 100 categories were trained with different parameters; see the results table below. More comments on the results will be included in the final report (T242971).

- Put together some visual examples of the labels output by the 100-class classifier and the 50-class classifier. The scores below each image represent the likelihood that the image belongs to the corresponding category.
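Assuming the per-image scores come from a softmax output layer, as is usual for this kind of classifier, they are one probability per category that sums to 1 across all categories, and the displayed labels are the highest-scoring ones. A minimal sketch of turning raw output scores (logits) into top-k labels follows. The `top_k_labels` helper, the category names, and the logit values are hypothetical and do not come from the actual classifiers.

```python
import math


def top_k_labels(logits, categories, k=3):
    """Convert raw logits into softmax probabilities and return the k most
    likely (category, probability) pairs, highest probability first."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(zip(categories, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical category names and logits, for illustration only.
categories = ["Bridges", "Churches", "Mountains", "Portraits"]
logits = [0.5, 2.0, -1.0, 0.1]
for name, p in top_k_labels(logits, categories, k=2):
    print(f"{name}: {p:.2f}")
```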

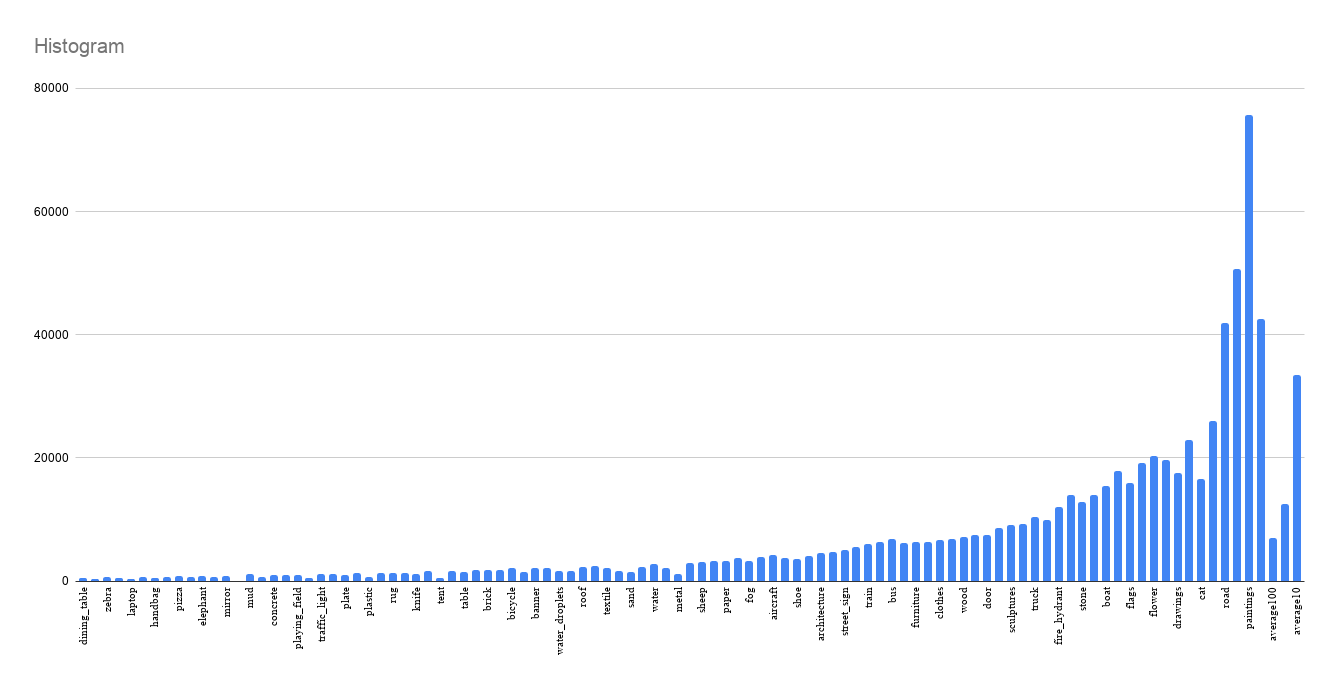

Also, a plot of the number of images per category, to help understand the reason behind some of the errors:
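Per-category counts like the ones plotted can be computed directly from the list of training samples. The sketch below is a minimal example that assumes the training set is available as (image file, category) pairs. The file names, categories, and the `images_per_category` helper are hypothetical. Categories at the top of the returned ranking have the fewest training examples, which is often where a classifier makes more mistakes.

```python
from collections import Counter


def images_per_category(samples):
    """Count training images per category and return (category, count) pairs,
    sorted from the least to the most represented category."""
    counts = Counter(category for _, category in samples)
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))


# Hypothetical (image file, category) pairs.
samples = [
    ("a.jpg", "Bridges"), ("b.jpg", "Bridges"), ("c.jpg", "Bridges"),
    ("d.jpg", "Churches"), ("e.jpg", "Churches"),
    ("f.jpg", "Mountains"),
]
for category, n in images_per_category(samples):
    print(category, n)
```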

I could close this task, but I'd like to train more classifiers from scratch before the end of Q2 to see if we can get some improvement.

Weekly update:

Worked on the infrastructure to train a simple model from scratch (i.e., with no pre-trained model): built the architecture, the loss function, and the input/output workflows.
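Suppose the from-scratch model uses the standard loss for single-label classification, categorical cross-entropy over softmax outputs. Under that assumption, the per-example loss can be computed directly from raw logits as shown below. The log-sum-exp step avoids overflow when logits are large. The function names are hypothetical, and this is a plain-Python sketch rather than the TensorFlow loss actually used.

```python
import math


def cross_entropy_from_logits(logits, label):
    """Categorical cross-entropy -log(softmax(logits)[label]), computed stably
    as logsumexp(logits) - logits[label]."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def mean_loss(batch_logits, batch_labels):
    """Average the per-example loss over a batch, as a training loop would minimize."""
    losses = [cross_entropy_from_logits(z, y) for z, y in zip(batch_logits, batch_labels)]
    return sum(losses) / len(losses)


# With uniform logits over 10 categories, the loss equals log(10) ≈ 2.303.
print(round(cross_entropy_from_logits([0.0] * 10, 3), 3))
# Large logits do not overflow.
print(cross_entropy_from_logits([1000.0, 0.0], 0))
```

The uniform-logits case is a handy sanity check: an untrained model over N categories should start near a loss of log(N).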

Early results seem encouraging, though accuracy was lower than with the pre-trained model. This was probably due to some hyperparameters and/or the simplicity of the architecture. I was about to test more, but stat1005 went down last Friday (see T247561), so I can't continue the tests until this is fixed. This work is almost done: it's really just a matter of launching a script that loops over different hyperparameters and performs training from scratch each time.
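The script described above boils down to a loop that trains one model per hyperparameter combination, one after another. Running sequentially also fits the one-process-at-a-time GPU constraint noted earlier. The loop then keeps the best configuration. The sketch below is minimal and assumes a hypothetical `train_and_evaluate` function that trains a model from scratch and returns its validation accuracy. The fake training function and the grid values are illustrative only, not the settings used in these experiments.

```python
import itertools


def sweep(train_and_evaluate, grid):
    """Train one model per combination in `grid`, sequentially, and return
    (best_config, best_score, all_results). `grid` maps each hyperparameter
    name to the list of values to try."""
    names = list(grid)
    results = []
    for values in itertools.product(*(grid[name] for name in names)):
        config = dict(zip(names, values))
        # Each run finishes before the next one starts (no parallel GPU jobs).
        score = train_and_evaluate(**config)
        results.append((config, score))
    best_config, best_score = max(results, key=lambda r: r[1])
    return best_config, best_score, results


# Hypothetical stand-in for a real training run: pretends lr=0.01, batch 64 is best.
def fake_train_and_evaluate(learning_rate, batch_size):
    return 1.0 - abs(learning_rate - 0.01) * 10 - abs(batch_size - 64) / 1000


grid = {"learning_rate": [0.1, 0.01, 0.001], "batch_size": [32, 64]}
best, score, results = sweep(fake_train_and_evaluate, grid)
print(best, len(results))
```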

Weekly update:

Ran the first two models from scratch, for 3 and 10 categories. The model seems to be overfitting the training set; now checking what's wrong. This stretch goal is likely much broader than expected and might require a dedicated goal for next quarter, taking 10-15% of my time. Basically, I need to design a much larger architecture and a broader experimental setup for it. I would like to discuss this, but I would lean toward closing this task for now and opening a new task for the stretch goal.
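One quick way to confirm this kind of overfitting is to compare per-epoch training and validation losses. Overfitting typically shows up as validation loss bottoming out and then rising while training loss keeps falling. The sketch below is minimal and assumes per-epoch loss histories are recorded during training. The `overfitting_report` helper and the loss values are hypothetical.

```python
def overfitting_report(train_loss, val_loss):
    """Given per-epoch training and validation losses, return the epoch with the
    lowest validation loss and whether the model looks overfitted afterwards,
    i.e. validation loss rose since that epoch while training loss kept falling."""
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    last = len(val_loss) - 1
    overfitting = (
        best_epoch < last
        and val_loss[last] > val_loss[best_epoch]
        and train_loss[last] < train_loss[best_epoch]
    )
    return best_epoch, overfitting


# Hypothetical loss histories: training loss keeps falling, validation loss turns up.
train_loss = [2.1, 1.5, 1.0, 0.6, 0.3, 0.1]
val_loss = [2.2, 1.8, 1.6, 1.7, 1.9, 2.3]
print(overfitting_report(train_loss, val_loss))
```

The epoch with the lowest validation loss is also a natural checkpoint to keep if training is stopped early.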

Closing this task as per our discussion.

Writing the report here: https://meta.wikimedia.org/wiki/Research:Prototypes_of_Image_Classifiers_Trained_on_Commons_Categories