In the parent task (T256081), @Miriam generated lists of image recommendations for six languages. In this task, the following people will evaluate the recommendations in the lists:

- enwiki: @MMiller_WMF

- frwiki: @Trizek-WMF & @Dyolf77_WMF

- arwiki: @Dyolf77_WMF

- viwiki: @PPham

- kowiki: @revi

- cswiki: @Urbanecm_WMF

I put each list in its own tab of this spreadsheet: https://docs.google.com/spreadsheets/d/120ux_OPnqGWwrufgAvoBFBqDiPGquK4Xgd4UevLFuu0/edit#gid=778067505 (it is also possible to view all top images with their articles at once via this link). In our first pass, we will evaluate the first 50 articles in each list. I sorted the articles randomly, so the first 50 are a representative sample of each list.

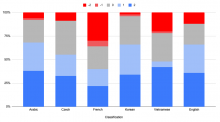
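For anyone who wants to reproduce this kind of first-pass selection outside the spreadsheet, here is a minimal sketch of shuffling a list of articles and taking the first 50. The function name `first_pass_sample`, the `seed` parameter, and the placeholder article titles are hypothetical; the actual sorting was done in the spreadsheet, and this is not necessarily how it was done there.

```python
import random


def first_pass_sample(articles, size=50, seed=None):
    """Return `size` articles picked by shuffling a copy of the list.

    `seed` is optional; passing a fixed value makes the order repeatable.
    """
    rng = random.Random(seed)
    shuffled = list(articles)  # copy so the caller's list is not modified
    rng.shuffle(shuffled)
    return shuffled[:size]


# Placeholder titles, for illustration only.
articles = [f"Article {i}" for i in range(200)]
sample = first_pass_sample(articles, size=50, seed=1)
print(len(sample))
```

Fixing the seed is only useful if several evaluators need to arrive at the same 50 articles independently.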

We'll classify each "top image" into one of these categories, adding explanatory comments where useful:

| Classification | Explanation |
| --- | --- |
| 2 | Great match for the article, illustrating the thing that is the title of the article; e.g. the article is "Food" and it is an image of food. |
| 1 | Good match, but difficult to confirm for the article unless the user has some context, and would need a good caption; e.g. the article is "Food" and it is an image of a famous chef. |
| 0 | Not a fit for the article at all; e.g. the article is "Food" and the image is a car. |
| -1 | Image is correct for the subject, but does not match the local culture; e.g. the article is "Food" and the image is a specific food from a specific culture that is not recognizable in the local culture. |
| -2 | Misleading image that a newcomer could accidentally think is correct; e.g. the article is "Taco" and the image is a burrito. |
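Once a tab has been rated, the scores can be tallied per category. The sketch below is one possible way to do that, assuming the ratings have been exported as a plain list of integers; the names `LABELS` and `summarize`, the short label wording, and the sample ratings are illustrative and not part of the spreadsheet.

```python
from collections import Counter

# Short labels for the five classifications in the table above.
LABELS = {
    2: "great match",
    1: "good match, needs context and a caption",
    0: "not a fit",
    -1: "correct subject, wrong local culture",
    -2: "misleading",
}


def summarize(ratings):
    """Count how many images received each classification.

    Raises ValueError if a rating is not one of the five allowed values.
    """
    unknown = [r for r in ratings if r not in LABELS]
    if unknown:
        raise ValueError(f"unexpected ratings: {unknown}")
    counts = Counter(ratings)
    # Report every category, including those with zero images.
    return {score: counts.get(score, 0) for score in LABELS}


# Made-up ratings, for illustration only.
example = [2, 2, 1, 0, -2]
for score, count in summarize(example).items():
    print(f"{score:>2} ({LABELS[score]}): {count}")
```

Rejecting unexpected values up front catches typos such as a stray `3` before they skew the totals.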