Feature summary:

On the participate page, we need to show depicts suggestions from the Metadata-to-Concept Module (currently in development, see sample requests and responses here).

These should display alongside the current Google Vision suggestions (only visible when the ?mv=true parameter is added to the end of the URL).

They will work in the same way as the Google Vision suggestions: clicking a suggestion adds it to the list of depicts changes. The user still needs to click save to actually add the statement.

See design here: T312873: Create mock designs for depicts suggestions from Google Vision and Metadata-to-Concept

Update 7 Oct 2022

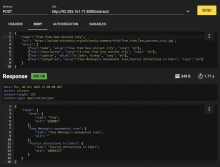

A first prototype of the metadata-to-concept API is now up and running on a virtual server listening on http://92.205.161.71:8080/extract/

It now has a basic concurrency model and should (in theory) be able to handle multiple requests simultaneously.

The attached screenshot should be self-explanatory; nevertheless, here are a few notes:

- The top-level JSON object has three keys: name, url and attrs. All of them are mandatory. The name and url values must be strings; the attrs value must be a list of dicts.

- At the moment name and url are ignored.

- All attributes used to derive concepts must be declared in the attrs list. Each attribute has a key, a value and a lang. The lang is currently ignored and defaults to "en". Always include the name attribute (= file name) in the list as a fallback.

- At this point the attribute types name, description, caption and categories are processed. Categories must be a semicolon-separated list of wiki categories.
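
To make the notes above concrete, here is a minimal Python sketch of how a client might assemble a request body. It is not taken from the prototype. The field names "key", "value" and "lang" inside each attrs entry are inferred from the wording of the notes, and the helper names build_payload and extract_concepts are hypothetical. The use of POST with a JSON body and a JSON response is also an assumption, so check all of this against the screenshot and the sample requests before relying on it.

```python
import json
from urllib import request

# Prototype endpoint quoted in the update above.
ENDPOINT = "http://92.205.161.71:8080/extract/"


def build_payload(name, url, description=None, caption=None, categories=()):
    """Build a request body with the mandatory keys name, url and attrs.

    Assumption: each attrs entry is a dict with "key", "value" and "lang".
    """
    if not isinstance(name, str) or not isinstance(url, str):
        raise TypeError("name and url must be strings")

    # Always include the file name as a fallback attribute.
    attrs = [{"key": "name", "value": name, "lang": "en"}]
    if description:
        attrs.append({"key": "description", "value": description, "lang": "en"})
    if caption:
        attrs.append({"key": "caption", "value": caption, "lang": "en"})
    if categories:
        # Categories are sent as one semicolon-separated string.
        attrs.append(
            {"key": "categories", "value": ";".join(categories), "lang": "en"}
        )
    return {"name": name, "url": url, "attrs": attrs}


def extract_concepts(payload, timeout=10):
    """Send the payload to the prototype.

    Assumption: the API accepts a JSON body via POST and answers with JSON.
    """
    req = request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example with made-up file metadata; no request is sent here.
payload = build_payload(
    "Eiffel Tower at night.jpg",
    "https://commons.wikimedia.org/wiki/File:Example.jpg",
    caption="The Eiffel Tower illuminated at night",
    categories=["Eiffel Tower", "Night in Paris"],
)
print(json.dumps(payload, indent=2))
```

Building the payload separately from sending it keeps the mandatory-key checks testable without network access to the prototype server.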