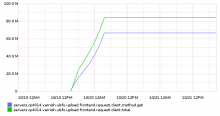

Graphite currently has a couple of reqstat.* metrics, which are generated from the udp2log stream by a Perl script under puppet:./files/udp2log/sqstat.pl. Despite their crudeness, the metrics are extremely useful, especially the error ones; we plot them under http://gdash.wikimedia.org/dashboards/reqerror/ and, more recently, we also have a check_graphite alert that notifies us of spikes in errors.

Unfortunately, while we get alerted for errors, investigating them is hard. The usual process is to log in to oxygen, check the 5xx responses and try to find patterns there, such as "all esams servers". Additionally, because the udp2log stream only contains the frontends' logs and because frontends retry on backend failure, there may be hidden backend 5xx responses that we never see but which still increase latency.

The whole mechanism should be rewritten from scratch. We should have separate metrics under the Graphite hierarchy, e.g. reqstats.eqiad.cp1062.backend.5xx, and show aggregated graphs in gdash using wildcards, which we can then zoom into when there's a problem. The metrics can be collected either centrally, using a udp2log (or kafka) consumer, or locally on each box, using varnishncsa/VSL collectors. The central approach currently lacks backend logs, but maybe we should eventually start collecting those as well, since they can be useful for finding hotspots.
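
To make the proposed hierarchy concrete, here is a rough Python sketch of how a collector (central or local) could count requests per status class and emit them in Graphite's plaintext format (`path value timestamp`). Everything here is an assumption for illustration: the `Request` tuple, the function names, and the path layout `reqstats.<site>.<host>.<layer>.<class>`, which is only inferred from the example above. Parsing the actual udp2log or varnishncsa lines is left out.

```python
from collections import Counter
from typing import Iterable, List, NamedTuple


class Request(NamedTuple):
    """One already-parsed log entry (hypothetical shape, not an existing format)."""
    site: str    # e.g. "eqiad"
    host: str    # e.g. "cp1062"
    layer: str   # "frontend" or "backend"
    status: int  # HTTP status code


def status_class(status: int) -> str:
    """Map an HTTP status code to its class, e.g. 503 -> "5xx"."""
    return f"{status // 100}xx"


def count_requests(requests: Iterable[Request], prefix: str = "reqstats") -> Counter:
    """Count requests per metric path: <prefix>.<site>.<host>.<layer>.<class>."""
    counts: Counter = Counter()
    for r in requests:
        path = f"{prefix}.{r.site}.{r.host}.{r.layer}.{status_class(r.status)}"
        counts[path] += 1
    return counts


def to_graphite_lines(counts: Counter, timestamp: int) -> List[str]:
    """Render the counts as Graphite plaintext lines, sorted by metric path."""
    return [f"{path} {value} {timestamp}" for path, value in sorted(counts.items())]
```

With per-host paths like these, an aggregated graph could in principle use a wildcard target such as `sumSeries(reqstats.*.*.backend.5xx)`, and narrowing it to `reqstats.esams.*.backend.5xx` would answer the "all esams servers" question directly.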