@dancy, it would be great if someone could finish this soon. While scap now has an option to mitigate potential helm hiccups, I think we should add it to the mix nevertheless.

Tue, Apr 16

Mon, Apr 15

Thu, Apr 11

Built and repackaged

Built and uploaded

Tue, Apr 9

In T361724#9690204, @dancy wrote: There is a tmux/screen check for scap stage-train, but nothing else. This could be factored out to cover other scap subcommands.

Suggestions:

- scap backport

- scap deploy

- scap deploy-promote

- scap sync-*

- scap train

- scap stage-train

- scap lock

Applying the tmux/screen check for all scap subcommands (especially those unrelated to deployment) is definitely undesirable.
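A minimal sketch of what a factored-out check could look like, limited to the subcommands suggested above. This is not scap's actual implementation: the function names and the `GUARDED_SUBCOMMANDS` list are hypothetical. The only thing it relies on is that tmux sets the `TMUX` environment variable and GNU screen sets `STY` for processes running inside a session.

```python
import fnmatch
import os

# Hypothetical list, copied from the suggestions above; not scap's real configuration.
GUARDED_SUBCOMMANDS = [
    "backport",
    "deploy",
    "deploy-promote",
    "sync-*",
    "train",
    "stage-train",
    "lock",
]


def in_terminal_multiplexer(env=None):
    """Return True if the environment looks like a tmux or screen session."""
    env = os.environ if env is None else env
    # tmux exports TMUX, GNU screen exports STY.
    return bool(env.get("TMUX") or env.get("STY"))


def requires_multiplexer(subcommand):
    """Return True if the subcommand matches one of the guarded patterns."""
    return any(
        fnmatch.fnmatchcase(subcommand, pattern) for pattern in GUARDED_SUBCOMMANDS
    )


def check_subcommand(subcommand, env=None):
    """Return an error message for a guarded subcommand run outside tmux/screen.

    Returns None when the subcommand is unguarded or a session is detected.
    """
    if requires_multiplexer(subcommand) and not in_terminal_multiplexer(env):
        return f"scap {subcommand} should be run inside tmux or screen"
    return None
```

Keeping the guarded list explicit means subcommands unrelated to deployment are never affected, which matches the concern above.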

Thu, Apr 4

Wed, Apr 3

We depooled mw-web-ro from eqiad and attempted a rollback.

Tue, Apr 2

In T360596#9676049, @akosiaris wrote: My 2, operationally minded, cents say to wait for the dust to settle a little bit before moving forward with any plan. We got time and I have my doubts regarding all 4 different forks surviving.

Thu, Mar 28

Wed, Mar 27

Switchover is done, it is Day 8, and we are back to Multi-DC. Thank you serviceops and @akosiaris for being good teammates and keeping an eye on things.

Tue, Mar 26

Thu, Mar 21

Mar 20 2024

Mar 19 2024

This is done; will reopen if something goes south.

Mar 6 2024

Mar 5 2024

mw-mcrouter ds has been deployed on staging (mw-mcrouter staging).

Mar 4 2024

Looks alright!

@Trizek-WMF as per our off-phabricator discussion, the major change is that this is not a procedure we test anymore, but it has become standard practice. Please edit the message as you see fit to reflect that.

Feb 29 2024

Feb 27 2024

Looking into the issue, we found that on 26 Feb at ~21:45 UTC, urldownloader1003 (a ganeti VM running on ganeti1027, i.e. the cluster master) lost network connectivity.

This was most likely related to T358597.

After issuing a restart, the VM came back to life normally.

Feb 23 2024

Feb 22 2024

Feb 21 2024

Feb 14 2024

Things are progressing well after the last change; please reopen if this resurfaces. Shoutout to @akosiaris for lending a hand.

In T356766#9537892, @kostajh wrote: In T356766#9537874, @jijiki wrote:

- meanwhile, we restarted the envoyproxies, which seems to have significantly improved the issue; 503s for now are around 2 per hour

Hmm, I am not seeing that reflected here: https://logstash.wikimedia.org/goto/d99b2beddeb28d71ddc74e7298c69cc8

Feb 13 2024

- tcpdump shows that upstream sends an RST, but nothing else useful

In T356766#9534550, @kostajh wrote: In T356766#9534518, @jijiki wrote: so far:

- dumped traffic at the pod level (ipoid has only one pod, service is low traffic), and I never saw a packet from an appserver whic

- from the pod's perspective, there are no 500x errors (grafana: envoy-telemetry-k8s)

- nothing standing out on lvs2013

- nothing odd on the k8s node itself

Is there something more we could log from the MW side that would help debug this? Is it possible there is some special routing happening because the originating request to ipoid happens in a POST request context (so it always originates from the primary DC)?

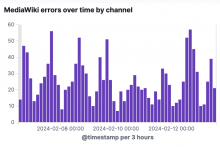

Feb 12 2024

so far:

Feb 8 2024

I set the host as inactive since I noticed a bit of log spam on lvs2013.