User Details

- User Since

- Feb 17 2017, 7:18 PM (374 w, 6 d)

- Availability

- Available

- LDAP User

- Jsn.sherman

- MediaWiki User

- JSherman (WMF) [ Global Accounts ]

Today

Pulling this back off our kanban since there hasn't been any follow-up from review in quite some time. @Soda, I'm happy to re-review if/when you pick this back up.

Hi there, @TAdeleye_WMF asked if I could provide code review support on this, which I'm happy to do whenever there is a patch against the master branch. However, I don't have a clear way to reproduce the issue against master. Could @dom_walden or @Driedmueller offer me (a person with MediaWiki experience, but no subject-matter expertise in STV polls) a concise way to reproduce the "declare winners" issue locally? I updated STVTallierTest_drw.php to the point where unit_test_stv.sh runs, but it eventually dies out (I'm assuming due to memory issues). Maybe an updated or smaller test file? The patch looks good on a read-through, but I wouldn't be comfortable giving a +2 based on that alone. It looks like there are some good findings here from live testing, but most of that discussion seems to deal with the rounding error.

Creating this schema was premature. T356100: Determine method for tracking edits that have been checked but not reverted by AutoModerator doesn't have an associated feature designed for it. We should close it out as declined once we merge in the patch to remove the schema.

Yesterday

Tue, Apr 23

[...]

- issues requiring intervention: as an example, we can't always merge the "undo" content if the edit to be reverted is no longer the most recent by the time we try to revert it. When this happens, we will want to surface it for manual revert by a moderator. The UX for that doesn't exist yet but it's a thing we know we'll need.
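A rough sketch of that flow, for discussion only: it's Python rather than the extension's PHP, and `Edit`, `page_latest_rev_id`, and the `try_undo_merge` callback are hypothetical stand-ins, not AutoModerator or MediaWiki APIs. The idea is that a plain revert is only safe while the edit is still the latest revision; otherwise we attempt the undo merge and, if it conflicts, flag it for a moderator instead of failing silently.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Outcome(Enum):
    REVERTED = "reverted"
    NEEDS_MANUAL_REVERT = "needs_manual_revert"


@dataclass
class Edit:
    rev_id: int              # hypothetical: revision we want to revert
    page_latest_rev_id: int  # hypothetical: page's latest revision at revert time


def attempt_revert(
    edit: Edit, try_undo_merge: Callable[[Edit], Optional[str]]
) -> Outcome:
    """Decide what happens to one revert attempt (illustrative only)."""
    if edit.rev_id == edit.page_latest_rev_id:
        # Still the most recent edit: a straightforward revert applies.
        return Outcome.REVERTED
    # Someone has edited since; try to merge the "undo" into the current text.
    merged_text = try_undo_merge(edit)
    if merged_text is None:
        # Merge conflict: surface it so a moderator can revert by hand.
        return Outcome.NEEDS_MANUAL_REVERT
    return Outcome.REVERTED
```

Returning an explicit outcome rather than just logging keeps the "needs manual revert" case easy to route into whatever UX we end up building for it.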

[...]

Per our research, we believe that AutoModerator will only attempt ~7 reverts daily with our "very cautious" pilot settings. For now, we'll just log problems within that set of revert attempts.

Thu, Apr 18

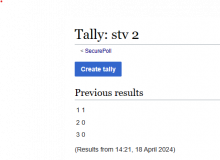

I pulled this down for a more thorough code review today and realized that I'm not clear on what the actual acceptance criteria are here. From the description, I would expect garbled tally output for STV poll tallies, but the output from master seems fine?

I double-checked in the CLI:

```sh
docker compose exec mediawiki bash -c "php extensions/SecurePoll/cli/tally.php --name 'stv 2'"
```

Wed, Apr 17

I wanted to note that the new toolbar UI (currently behind the pagetriage_tb URL parameter) already replaces draggable(). You can access it by following review links from the feed with the parameter set: https://en.wikipedia.org/wiki/Special:NewPagesFeed?pagetriage_tb=new. It's a partial Vue migration that should work as a drop-in replacement for the current one.

See T340117 for more info.

Tue, Apr 16

[...]

- observability: we want moderators to be able to see/validate that it is working in cases where it is not reverting edits. There isn't a clear feature designed around this for the pilot. Perhaps just observability into the overall activity of the extension would be adequate for now.

Noting that we can look at the GrowthExperiments extension page for JSON config notes.

Mon, Apr 15

@Ladsgroup, thank you for looking at this.

Fri, Apr 12

[...]

Oh interesting - is DisableAnonTalk used on any production WMF wikis? I don't see it in CommonSettings or InitialiseSettings but not sure if I should be looking elsewhere.

[...]

I am not aware of its use on any of our wikis.

[...]

Given this, the skip for revision-deleted edits, and seemingly no good reason to differentiate anon edits, I think we're back to a single edit summary?

Thu, Apr 11

[...]

Susana just mentioned to me that we're currently skipping edits where the username/edit has been revision deleted. It makes sense to me, actually, because if revision deletion is happening, an admin has looked at the edit and is already taking moderation actions on it; if the edit should be reverted, we can trust that they're also going to take that action.
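If we keep that skip, the check itself can be small. The sketch below assumes the bit values of MediaWiki's `rev_deleted` field (`RevisionRecord::DELETED_TEXT` = 1, `DELETED_COMMENT` = 2, `DELETED_USER` = 4, `DELETED_RESTRICTED` = 8); the `should_skip` helper is hypothetical, and it only treats hidden content or a hidden username as a reason to skip, matching the wording above.

```python
# Bit values assumed to mirror MediaWiki's rev_deleted field (RevisionRecord::DELETED_*).
DELETED_TEXT = 1
DELETED_COMMENT = 2
DELETED_USER = 4
DELETED_RESTRICTED = 8


def should_skip(rev_deleted: int) -> bool:
    """Hypothetical helper: skip edits whose content or username is revision-deleted."""
    # Any hidden text or hidden username means an admin is already handling the edit.
    return bool(rev_deleted & (DELETED_TEXT | DELETED_USER))
```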

[...]

In terms of imported edits, there is again a potential special case, since we need to generate an interwiki link. But would AutoModerator ever be reverting an imported edit? That is, do edits generated via Special:Import trigger AutoModerator review? If not, we could remove these cases from consideration.

Fri, Apr 5

We'll need to check this next week, but I added really basic templating in my WIP patch.

Okay, it sounds like the spike is still warranted. The way we do this now may end up getting thrown out later, but I don't think that's a big deal. For now I'll implement the config string as requested along with the edge case checks that I mentioned in earlier comments.
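For reference, a minimal sketch of the kind of config-string templating I mean, in Python for brevity: the template text, the `$user`/`$rev_id` placeholder names, and `render_summary` are all hypothetical, not the actual config key or format in the patch.

```python
from string import Template

# Hypothetical config value; the real config key and placeholder names may differ.
EDIT_SUMMARY_TEMPLATE = "Reverting edit $rev_id by [[Special:Contributions/$user|$user]]"


def render_summary(template: str, user: str, rev_id: int) -> str:
    """Fill the edit summary template (illustrative sketch only)."""
    # safe_substitute leaves unknown placeholders intact instead of raising,
    # so a malformed on-wiki config string can't break the revert itself.
    return Template(template).safe_substitute(user=user, rev_id=rev_id)
```

Using a lenient substitution is a deliberate choice here: a typo in the configured string produces an odd-looking summary rather than a failed revert.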

merged!