Two previous posts, Exempla Docent Part 1 and Part 2, outlined the QA approach to testing the functionality of the Suggested edits (SE) module on Special:Homepage. Part 1 explored testing the implementation of the ORES articletopic model logic, and Part 2 described testing user workflows.

This post, Part 3 of Exempla Docent, presents another important aspect of QA work: testing instrumentation. Analyzing the collected data is a complex task that is handled by data analytics, but there are still quite a few points worth checking before the instrumentation is deployed to production. Let's look at a specific example: Schema:HomepageModule on Special:Homepage.

Note: Special:Homepage with the SE module is enabled by default for most new accounts on participating wikis, e.g. testwiki, cswiki, svwiki, or arwiki. On the betacluster, Special:Homepage is enabled not only on those wikis but also on enwiki. For existing user accounts, Special:Homepage can be enabled via the Special:Preferences option "Newcomer homepage" in the User profile tab.

Step zero should, of course, be getting a good understanding of the user workflows on Special:Homepage. The page Understanding First Day - full user flow gives a good overview of them. The EventLogging/TestingOnBetaCluster documentation page is also a good reference for in-depth information on EventLogging on the betacluster.

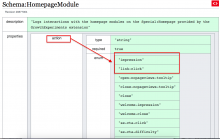

Step one is going through the Schema:HomepageModule page. The page has full information on the actions included in the schema, along with descriptions and other helpful details (e.g. impression: "Module is shown to user", or link-click: "User clicks a link in the module"). These descriptions are basically ready-to-go tests.

| Schema action to check | Tests | Description |
|---|---|---|
| impression | Test 1 | when a page loads, the impression event should be recorded for each module |
| link-click | Test 2 | click on a link(s) present in the modules |

Step two: using the test scenarios from Step one, perform the actions on the Homepage. The browser dev tools console lets us see the recorded events. For example, testing Schema:HomepageModule includes the following steps:

- open your browser's dev tools and enable "persistent log" in the Console

- run the following snippet in the Console (credit to @kostajh):

mw.trackSubscribe('event.HomepageModule', console.log);

- perform the actions that trigger EventLogging and compare the recorded events with the information documented in the relevant schema description

As a result, the Console displays every recorded event with detailed information, for example the data sent with an impression event.
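Printing events with console.log works well for a quick look, but for a longer test session it can help to keep the events in an array and inspect them afterwards. The following is only a minimal sketch: `createEventCollector` and `listActions` are hypothetical helpers (not part of MediaWiki), and it assumes that the event data passed to the handler carries an `action` field, as the schema description suggests.

```javascript
// Hypothetical helper: keeps every tracked event so it can be inspected later.
function createEventCollector() {
  const events = [];
  // mw.trackSubscribe calls its handler with (topic, data).
  function handler(topic, data) {
    events.push({ topic: topic, data: data, seenAt: Date.now() });
  }
  return { events: events, handler: handler };
}

const collector = createEventCollector();

// Subscribe only when MediaWiki's `mw` object is available (i.e. on a wiki page).
if (typeof mw !== 'undefined' && typeof mw.trackSubscribe === 'function') {
  mw.trackSubscribe('event.HomepageModule', collector.handler);
}

// Hypothetical helper: returns the `action` of each collected event, in order.
// Assumes the event data has an `action` field.
function listActions(events) {
  return events.map(function (entry) {
    return entry.data && entry.data.action;
  });
}
```

After performing the test actions, running `console.table(listActions(collector.events))` in the Console gives a compact list of the recorded actions to compare against the test scenarios.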

Step three: quite often it makes sense to check the logs directly. For server-side schemas, e.g. EditorJourney, this is in fact the only way to check whether events are being recorded. The instructions consist of the following simple steps:

- log in and go to the directory with logs:

ssh deployment-eventlog05.eqiad.wmflabs

cd /srv/log/eventlogging

- to verify that events have been properly logged, you can use grep to find specific records. For example, to see which HomepageModule link-click events were recorded for the impact module on mobile, you can use:

grep '"schema": "HomepageModule"' all-events.log|grep 'impact'|grep 'link-click'|grep '"is_mobile": true'
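A chain of greps matches text anywhere in the line, so a parsed filter can be more precise once the log lines have been copied out. The sketch below is an illustration under assumptions, not the team's tooling: it assumes each log line is one JSON object with `schema` and `event` keys, that the module name lives in an `event.module` field, and `filterEvents` is a hypothetical helper. The sample lines are made up for the example.

```javascript
// Hypothetical helper: keep only records of one schema whose event fields
// match all the given criteria exactly.
function filterEvents(logText, schema, criteria) {
  return logText
    .split('\n')
    .filter(function (line) { return line.trim() !== ''; })
    .map(function (line) {
      // Malformed lines are skipped rather than aborting the whole run.
      try { return JSON.parse(line); } catch (e) { return null; }
    })
    .filter(function (record) {
      if (!record || record.schema !== schema || !record.event) { return false; }
      return Object.keys(criteria).every(function (key) {
        return record.event[key] === criteria[key];
      });
    });
}

// Made-up sample lines; the real log format is an assumption here.
const sampleLog = [
  '{"schema": "HomepageModule", "event": {"action": "link-click", "module": "impact", "is_mobile": true}}',
  '{"schema": "HomepageModule", "event": {"action": "impression", "module": "impact", "is_mobile": false}}',
  '{"schema": "EditorJourney", "event": {"action": "impression"}}'
].join('\n');

// Same intent as the grep chain: impact module, link-click, mobile only.
const matches = filterEvents(sampleLog, 'HomepageModule', {
  module: 'impact',
  action: 'link-click',
  is_mobile: true
});
console.log(matches.length);
```

Unlike grep, this only matches when the value sits in the expected field, so a link whose URL happens to contain the word "impact" would not produce a false hit.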

What can be checked on the betacluster? At the very least, the tests should verify that

- there are no errors in the recorded events

- all actions and all info (according to a schema description) are present

- there are no missing or duplicate actions

- and, overall, the logic of tested user workflows is reflected accurately in the recorded events
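Part of this checklist can be sketched as a small script run over a list of recorded events. This is only an illustration under assumptions: `checkEvents` is a hypothetical helper, the field names (`action`, `module`, `is_mobile`) are taken from the examples in this post rather than from the full schema, and the `repeatable` flag is an invented way to mark actions that may legitimately occur more than once.

```javascript
// Hypothetical helper: returns a list of human-readable problems.
// - every event must have all required fields
// - every expected module/action pair must be present
// - a pair seen more than once is flagged unless marked `repeatable`
function checkEvents(events, expectedActions, requiredFields) {
  const problems = [];
  const seen = {};

  events.forEach(function (event, index) {
    requiredFields.forEach(function (field) {
      if (event[field] === undefined || event[field] === null) {
        problems.push('event ' + index + ' is missing field "' + field + '"');
      }
    });
    const key = event.module + '/' + event.action;
    seen[key] = (seen[key] || 0) + 1;
  });

  expectedActions.forEach(function (expected) {
    const key = expected.module + '/' + expected.action;
    const count = seen[key] || 0;
    if (count === 0) {
      problems.push('missing: ' + key);
    } else if (count > 1 && !expected.repeatable) {
      problems.push('duplicate: ' + key + ' (' + count + ' times)');
    }
  });

  return problems;
}

// Made-up events for illustration.
const recorded = [
  { action: 'impression', module: 'impact', is_mobile: false },
  { action: 'impression', module: 'impact' }
];

const problems = checkEvents(
  recorded,
  [
    { module: 'impact', action: 'impression' },
    { module: 'impact', action: 'link-click', repeatable: true }
  ],
  ['action', 'module', 'is_mobile']
);
problems.forEach(function (problem) { console.log(problem); });
```

A script like this does not replace reading the events against the workflow, since it cannot tell whether the recorded sequence reflects what the user actually did, but it can flag the mechanical problems quickly.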

Even with the limitations of the betacluster environment, checking the instrumentation (the schemas) before deployment to production can give valuable insights, catch bugs and errors, and, if necessary, lead to changes in the schema itself. In the context of Growth team projects, instrumentation plays a crucial role in answering important questions about how features are being used and in deciding how to improve Special:Homepage, so that newcomer editors can easily find editing tasks and successfully publish their first edits.
