The secret of getting ahead is getting started. The secret of getting started is breaking your complex overwhelming tasks into small manageable tasks, and then starting on the first one.

—Mark Twain

Prep work

Search is a sine qua non - a necessary component for making information accessible, so that users and communities across Wikimedia projects can effectively "share in the sum of all knowledge". Since Special:MediaSearch offers a new way of searching media (image, audio, video) and other files and pages, it is critical to have a thorough QA analysis of how well the new search meets users' expectations. However, the question of what makes a search efficient, or whether it works well at all, is not an easy one. For example, the Measuring User Search Satisfaction research found the quality of search performance quite challenging to assess:

"On desktop alone, 64 million searches are made a day [...]. This makes measuring how well the system is doing of paramount concern, and we don't currently have a good way of doing that."

Well, what exactly could be tested then? Which aspects of the search functionality are to be tested, and how should they be tested?

Following the first part of Mark Twain's advice - "The secret of getting ahead is getting started" - we can start by understanding what MediaSearch is supposed to do. The following description (see Commons:Structured data/Media search) gives a concise overview of the context for MediaSearch and, more importantly, specific guidelines for mapping the goals of the high-level QA analysis:

Special:MediaSearch is an alternative, image-focused way to find media on Commons that is being developed. MediaSearch uses categories, structured data and wikitext from Commons, and Wikidata to find its results.

Looking closely at the description and breaking it into smaller chunks (following the next part of Mark Twain's advice - "The secret of getting started is breaking your complex overwhelming tasks into small manageable tasks"), the QA analysis for future testing becomes more specific. The table below maps specific parts of the description to the high-level QA tasks.

| Description | QA analysis |
|---|---|
| "an alternative way to find media" | Special:MediaSearch should be compared with Special:Search to see how the new search functionality performs. |
| "image-focused way" | It would be important to see how images are displayed - image quality, the positioning of images, images' meta info etc. |
| "MediaSearch uses categories, structured data and wikitext" | The testing should be segmented into clusters of tests based on overall search functionality. |

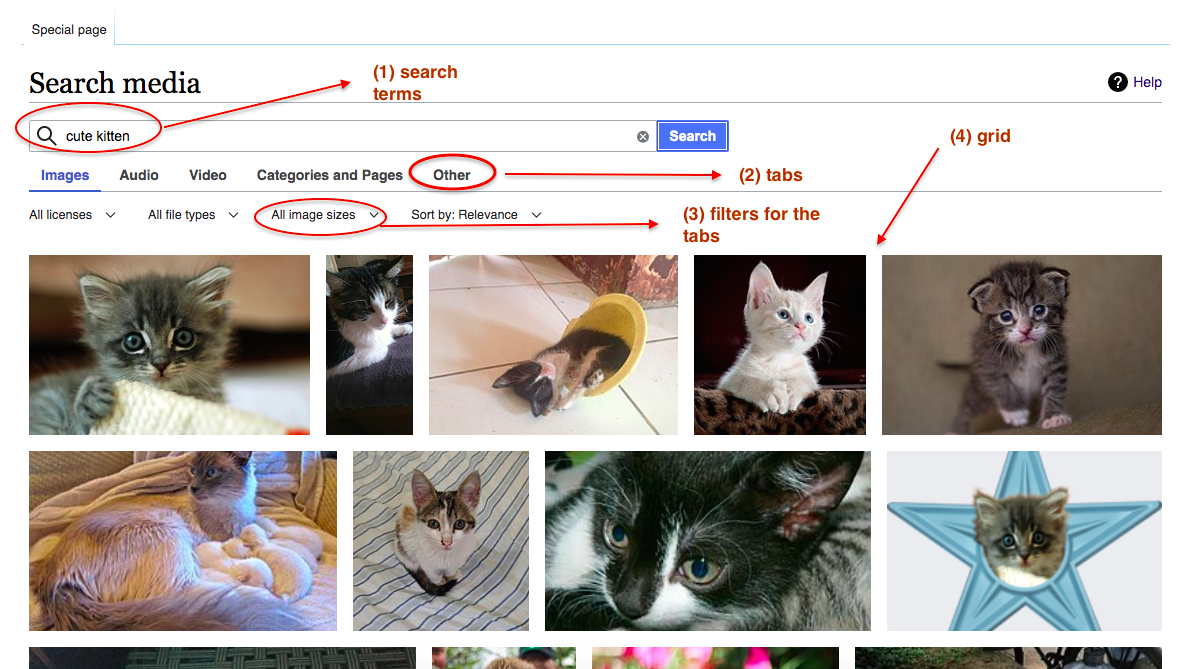

Now the Special:MediaSearch page looks like a test map with the areas for testing:

Testing search

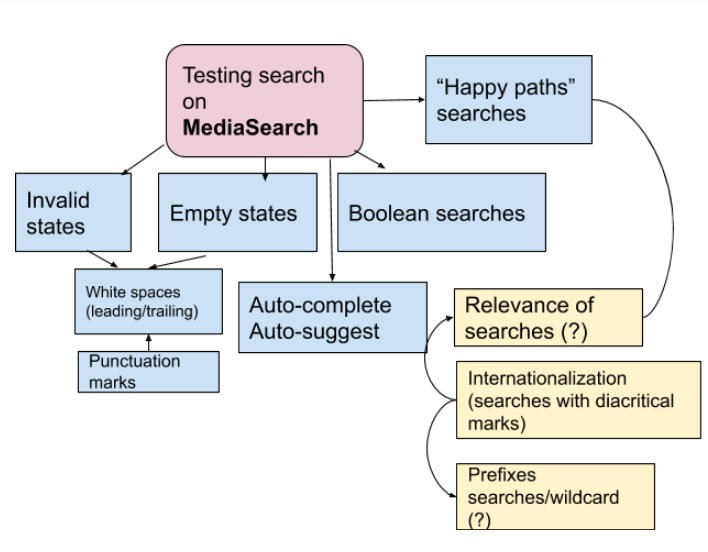

After breaking the big task (testing the Special:MediaSearch page functionality) into manageable tasks, we need to start on the first one - testing the search. The drawing below provides an overview of how the search test cases were segmented. The segmentation resulted from reading the documentation - Help:Searching and Help:CirrusSearch - and from previous experience with testing the search functionality for the Wikipedia apps.

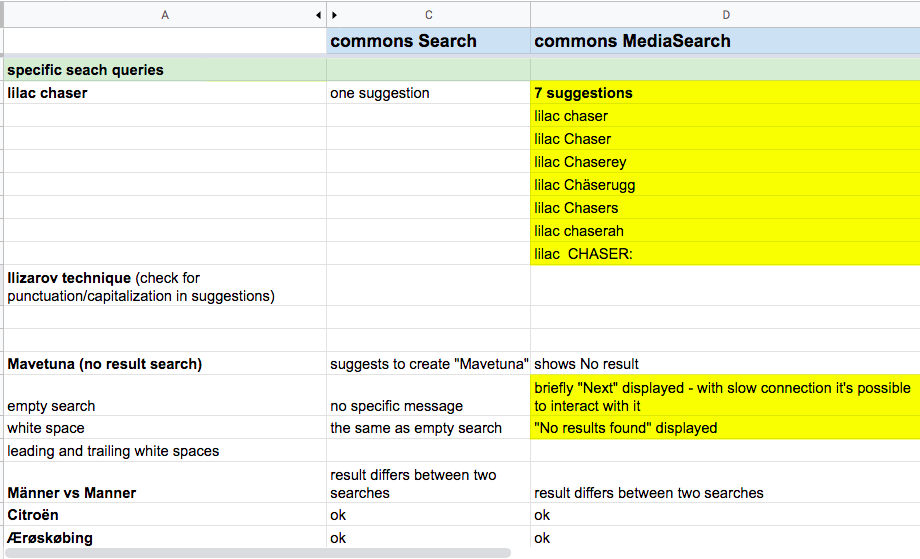

After identifying the segments, the next two steps are writing the specific test cases in a Google spreadsheet and getting them reviewed by the Structured Data team developers. The reviewing step is vital to make sure that all important search options are tested. The spreadsheet acted as a working document not only for storing the test cases, but also for comparing the MediaSearch test results with the Special:Search results and for communicating the test results back to the team. The screenshot below shows part of the spreadsheet with the test cases (for Search and MediaSearch). The highlighted cells and notes indicate results that should either be discussed or followed up with more testing.
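Separately from the spreadsheet, the comparison step described above can also be sketched in code. The snippet below is only an illustration, not the tooling the team used: the `SearchTestCase` structure, the segment names, the file titles, and the 0.5 threshold are all hypothetical. It records the results of one query from both Special:Search and Special:MediaSearch and flags the case for follow-up when the two result sets overlap too little.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SearchTestCase:
    """One row of a (hypothetical) test-case sheet."""
    segment: str                    # assumed segment name, e.g. "keyword"
    query: str                      # the search string that was entered
    search_results: List[str]       # titles returned by Special:Search
    mediasearch_results: List[str]  # titles returned by Special:MediaSearch


def overlap(a: List[str], b: List[str]) -> float:
    """Jaccard overlap of two result lists, ignoring order and duplicates."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 1.0  # two empty result sets agree with each other
    return len(set_a & set_b) / len(set_a | set_b)


def needs_follow_up(case: SearchTestCase, threshold: float = 0.5) -> bool:
    """Flag a case when the two searches disagree more than the threshold allows."""
    return overlap(case.search_results, case.mediasearch_results) < threshold


# Illustrative data only; these titles are made up for the example.
cases = [
    SearchTestCase("keyword", "cat",
                   ["File:Cat.jpg", "File:Cat2.jpg"],
                   ["File:Cat.jpg", "File:Cat2.jpg", "File:Cat3.jpg"]),
    SearchTestCase("category", "incategory:Dogs",
                   ["File:A.jpg"],
                   ["File:B.jpg"]),
]

flagged = [c.query for c in cases if needs_follow_up(c)]
print(flagged)
```

A low overlap is not necessarily a defect, since MediaSearch is image-focused by design; a flag of this kind only marks a row for discussion, much like the highlighted cells in the spreadsheet.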

Although testing was primarily focused on comparing search functionality between Search and MediaSearch, some UX issues surfaced as well. For example, there were inconsistencies in the auto-complete/auto-suggest behavior, a couple of performance issues needed attention, and there were further UX issues, e.g. how an empty search is communicated and how the cancel button (the 'x' button) behaves.

The prep QA analysis - reading the documentation, segmenting the tests, and discussing the test cases - paid off. Testing the search functionality on the Special:MediaSearch page has helped to increase understanding of what users should expect from the new search. As a result, the new search - MediaSearch - is efficient and robust, and it serves users' expectations better.
