How’d we do in our pursuit of operational excellence last month? Read on to find out!

Incidents

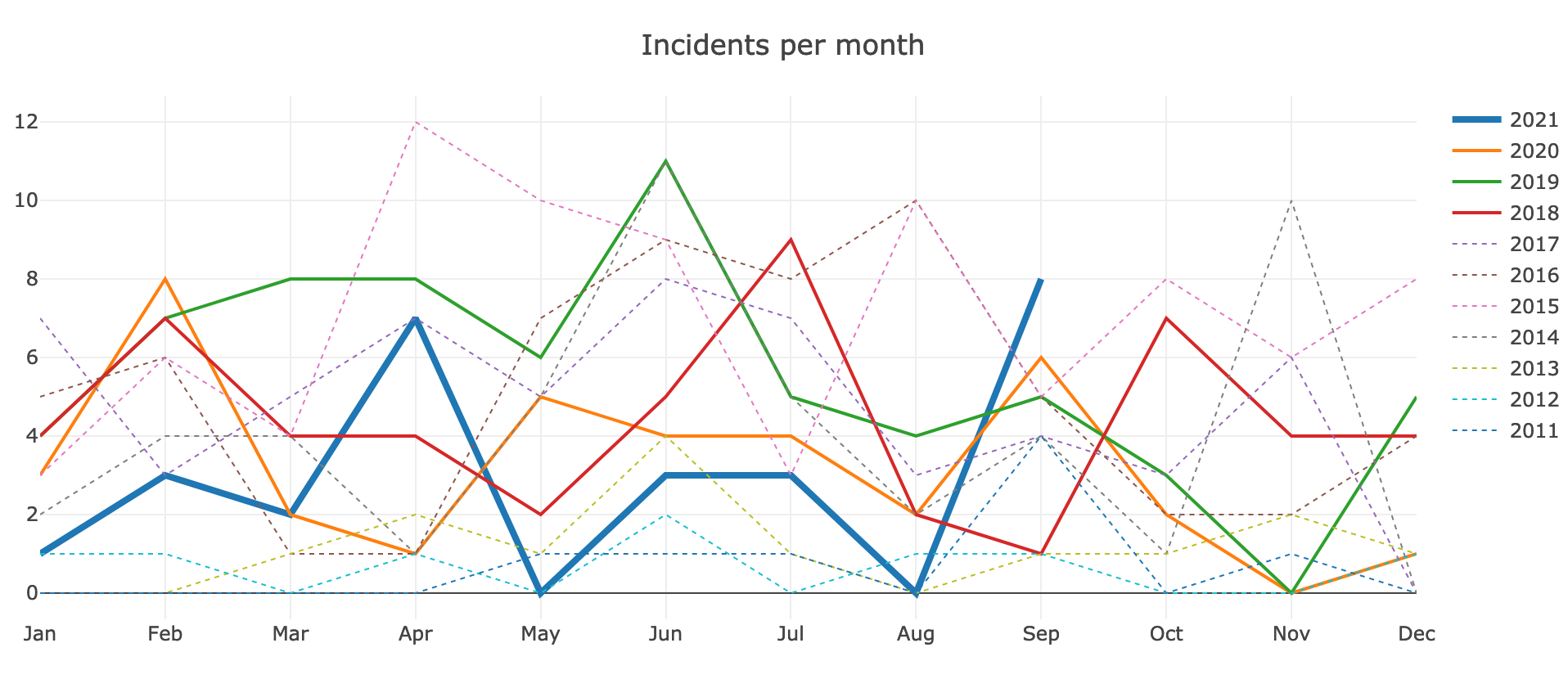

We've had quite an eventful month, with 8 documented incidents in September. That's the highest since last year (Feb 2020) and one of the three worst months of the last five years.

- 2021-09-01 partial Parsoid outage
  - Impact: For 9 hours, 10% of Parsoid requests to parse/save pages were failing on all wikis. Little to no end-user impact apart from minor delays due to RESTBase retries.

- 2021-09-04 appserver latency
  - Impact: For 37 minutes, MW backends were slow with 2% of requests receiving errors. This affected all wikis through logged-in users, bots/API queries, and some page views from unregistered users (e.g. pages that were recently edited or expired from CDN cache).

- 2021-09-06 Wikifeeds
  - Impact: For 3 days, the Wikifeeds API failed ~1% of requests (e.g. 5 of 500 req/s).

- 2021-09-12 Esams upload
  - Impact: For 20 minutes, images were unavailable for people in Europe, affecting all wikis.

- 2021-09-13 CirrusSearch restart
  - Impact: For ~2 hours, search was unavailable on Wikipedia from all regions. Search suggestions were missing or slow, and the search results page errored with "Try again later".

- 2021-09-18 appserver latency
  - Impact: For ~10 minutes, MW backends were slow or unavailable for all wikis.

- 2021-09-26 appserver latency
  - Impact: For ~15 minutes, MW backends were slow or unavailable for all wikis.

- 2021-09-29 eqiad kubernetes
  - Impact: For 2 minutes, MW backends were affected by a Kubernetes issue (via Kask sessionstore). 1500 edit attempts failed (8% of POSTs), and logged-in pageviews were slowed down, often taking several seconds.

Remember to review and schedule Incident Follow-up work in Phabricator. These tasks are the preventive measures and tech debt mitigations written down after an incident is concluded.

Image from Incident graphs.

Trends

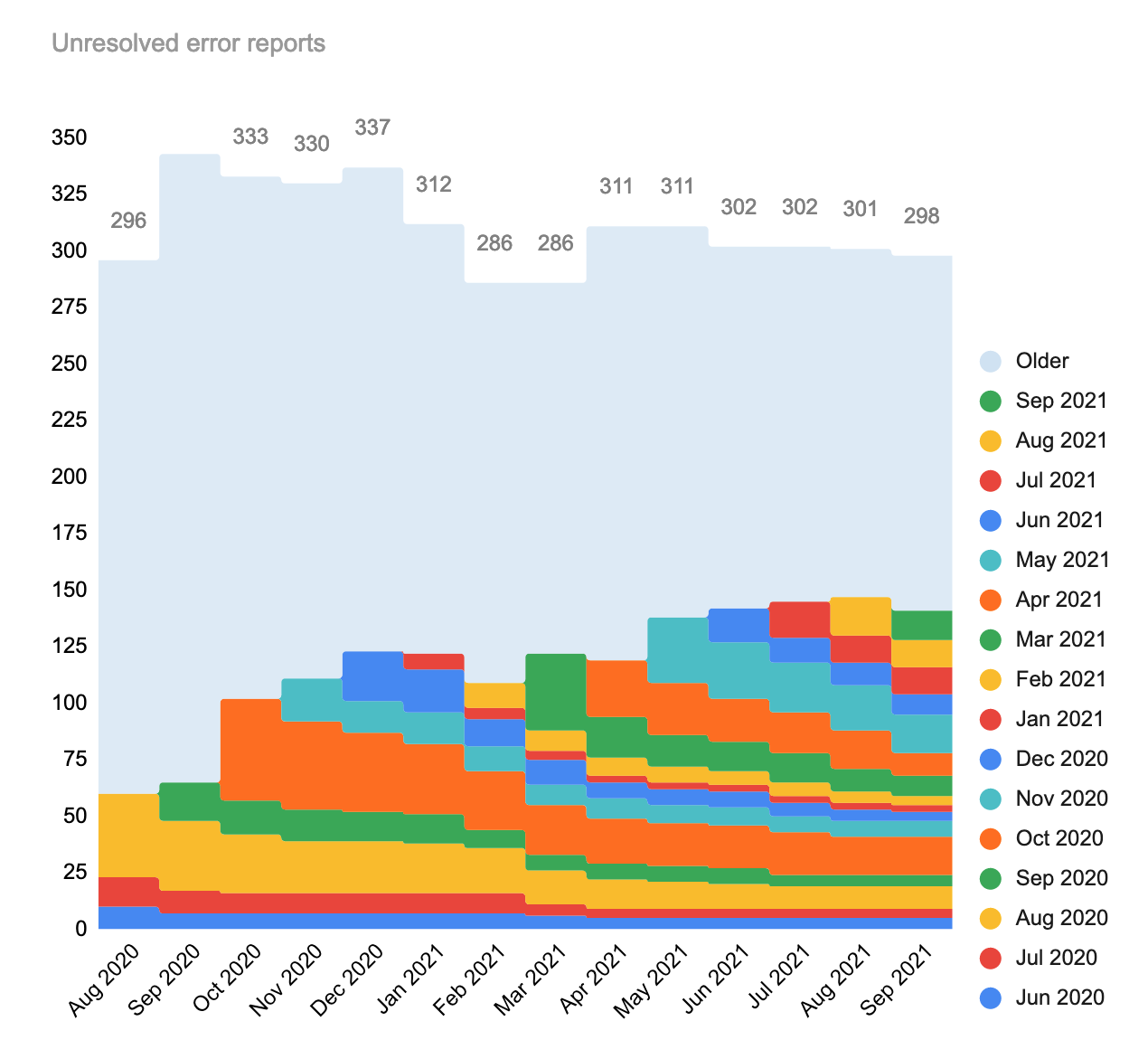

The month of September saw 24 new production error reports of which 11 have since been resolved, and today, three to six weeks later, 13 remain open and have thus carried over to the next month. This is about average, although it makes it no less sad that we continue to introduce (and carry over) more errors than we rectify in the same time frame.

On the other hand, last month we did have a healthy focus on some of the older reports. The workboard stood at 301 unresolved errors last month. Of those, 16 were resolved. With the 13 new errors from September, this reduces the total slightly, to 298 open tasks.
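The arithmetic behind these totals can be checked with a few lines of Python. This is only a sketch using the figures quoted in this post; the function and variable names are made up for illustration and are not part of any Wikimedia tooling.

```python
def open_after_month(previously_open: int, closed_old: int, new_unresolved: int) -> int:
    """Carry the open-task count forward by one month (hypothetical helper)."""
    return previously_open - closed_old + new_unresolved


# Figures quoted in this post.
reported_september = 24   # new production error reports in September
resolved_september = 11   # of those, resolved since
carried_over = reported_september - resolved_september

total_open = open_after_month(
    previously_open=301,  # workboard total as of the previous edition
    closed_old=16,        # older reports resolved since then
    new_unresolved=carried_over,
)

print(carried_over, total_open)
```

A net change of -3 (16 closed against 13 carried over) is what moves the workboard from 301 to 298.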

For the month-over-month numbers, refer to the spreadsheet data.

Did you know

- 💡 The default "system error" page now includes a request ID. T291192

- 💡 To zoom in and find your team's error reports, use the appropriate "Filter" link in the sidebar of the workboard.

Outstanding errors

Take a look at the workboard and look for tasks that could use your help.

Summary over recent months:

| Month | Unresolved |
|---|---|
| Jan 2021 (50 issues) | 3 left. Unchanged. |
| Feb 2021 (20 issues) | 5 > 4 left. |
| Mar 2021 (48 issues) | 10 > 9 left. |
| Apr 2021 (42 issues) | 17 > 10 left. |
| May 2021 (54 issues) | 20 > 17 left. |
| Jun 2021 (26 issues) | 10 > 9 left. |
| Jul 2021 (31 issues) | 12 left. Unchanged. |
| Aug 2021 (46 issues) | 17 > 12 left. |
| Sep 2021 (24 issues) | 13 unresolved issues remaining. |

| Tally | |
|---|---|
| 301 | issues open, as of Excellence #35 (August 2021) |
| -16 | issues closed, of the previous 301 open issues. |
| +13 | new issues that survived September 2021. |
| 298 | issues open, as of today (19 Oct 2021). |

Thanks!

Thank you to everyone who helped by reporting, investigating, or resolving problems in Wikimedia production. Thanks!

Until next time,

– Timo Tijhof
