How are we doing in our striving for operational excellence? Read on to find out!

Incidents

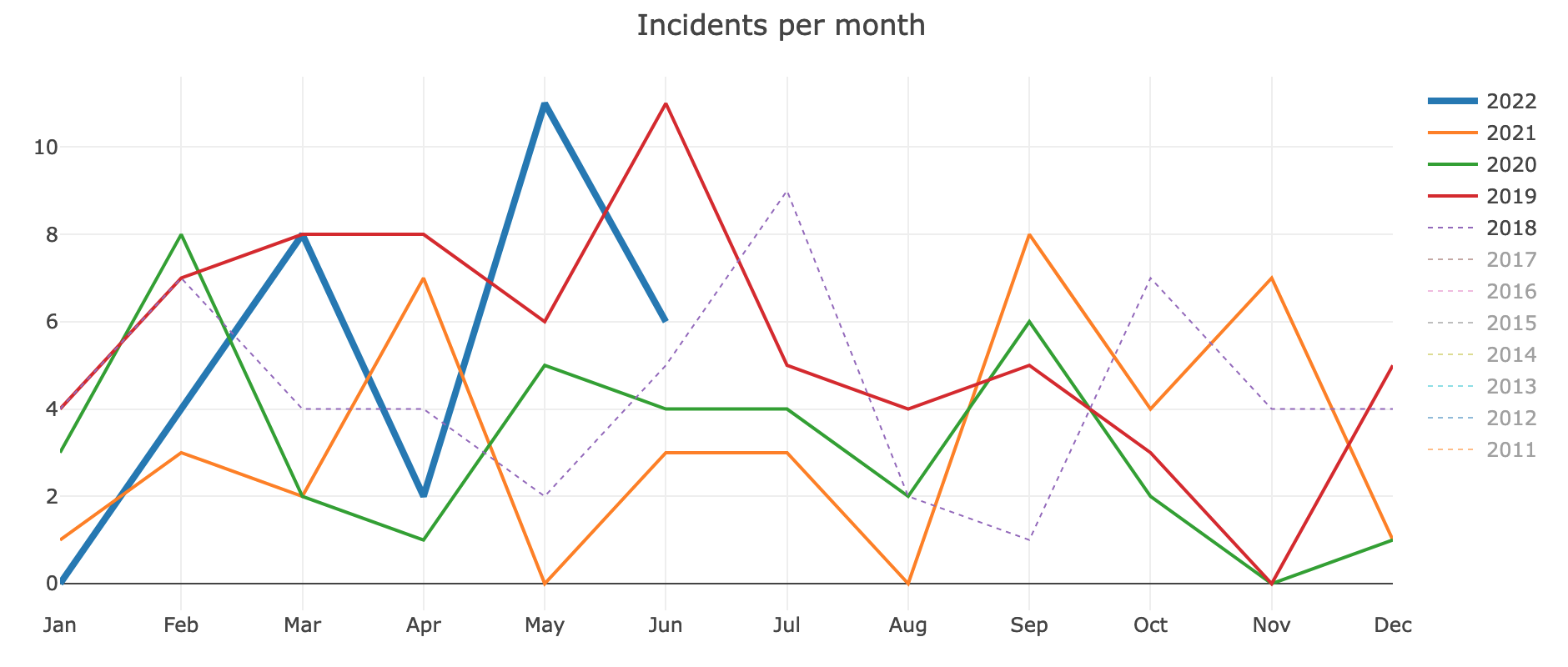

There were six incidents in June this year. That's double the median of three per month over the past two years (Incident graphs).

2022-06-01 cloudelastic

Impact: For 41 days, Cloudelastic was missing search results about files from commons.wikimedia.org.

2022-06-10 overload varnish haproxy

Impact: For 3 minutes, wiki traffic was disrupted in multiple regions for cached and logged-in responses.

2022-06-12 appserver latency

Impact: For 30 minutes, wiki backends were intermittently slow or unresponsive, affecting a portion of logged-in requests and uncached page views.

2022-06-16 MariaDB password

Impact: For 2 hours, a current production database password was publicly known. Other measures ensured that no data could be compromised (e.g. firewalls and selective IP grants).

2022-06-21 asw-a2-codfw power

Impact: For 11 minutes, one of the Codfw server racks lost network connectivity. Among the affected servers was an LVS host. Another LVS host in Codfw automatically took over its load balancing responsibility for wiki traffic. During the transition, there was a brief increase in latency for regions served by Codfw (Mexico, and parts of US/Canada).

2022-06-30 asw-a4-codfw power

Impact: For 18 minutes, servers in the A4-codfw rack lost network connectivity. Little to no external impact.

Incident follow-up

Recently completed incident follow-up:

Audit database usage of GlobalBlocking extension

Filed by Amir (@Ladsgroup) in May following an outage due to DB load from GlobalBlocking. Amir reduced the extension's DB load by 10% by avoiding checks for edit traffic from WMCS and Toolforge. He also implemented stats for monitoring GlobalBlocking DB queries going forward.

Reduce Lilypond shellouts from VisualEditor

Filed by Reuven (@RLazarus) and Kunal (@Legoktm) after a shellbox incident. Ed (@Esanders) and Sammy (@TheresNoTime) improved the Score extension's VisualEditor plugin to increase its debounce duration.

Remember to review and schedule Incident Follow-up work in Phabricator! These are preventive measures and tech debt mitigations written down after an incident is concluded. Read more about past incidents at Incident status on Wikitech.

Trends

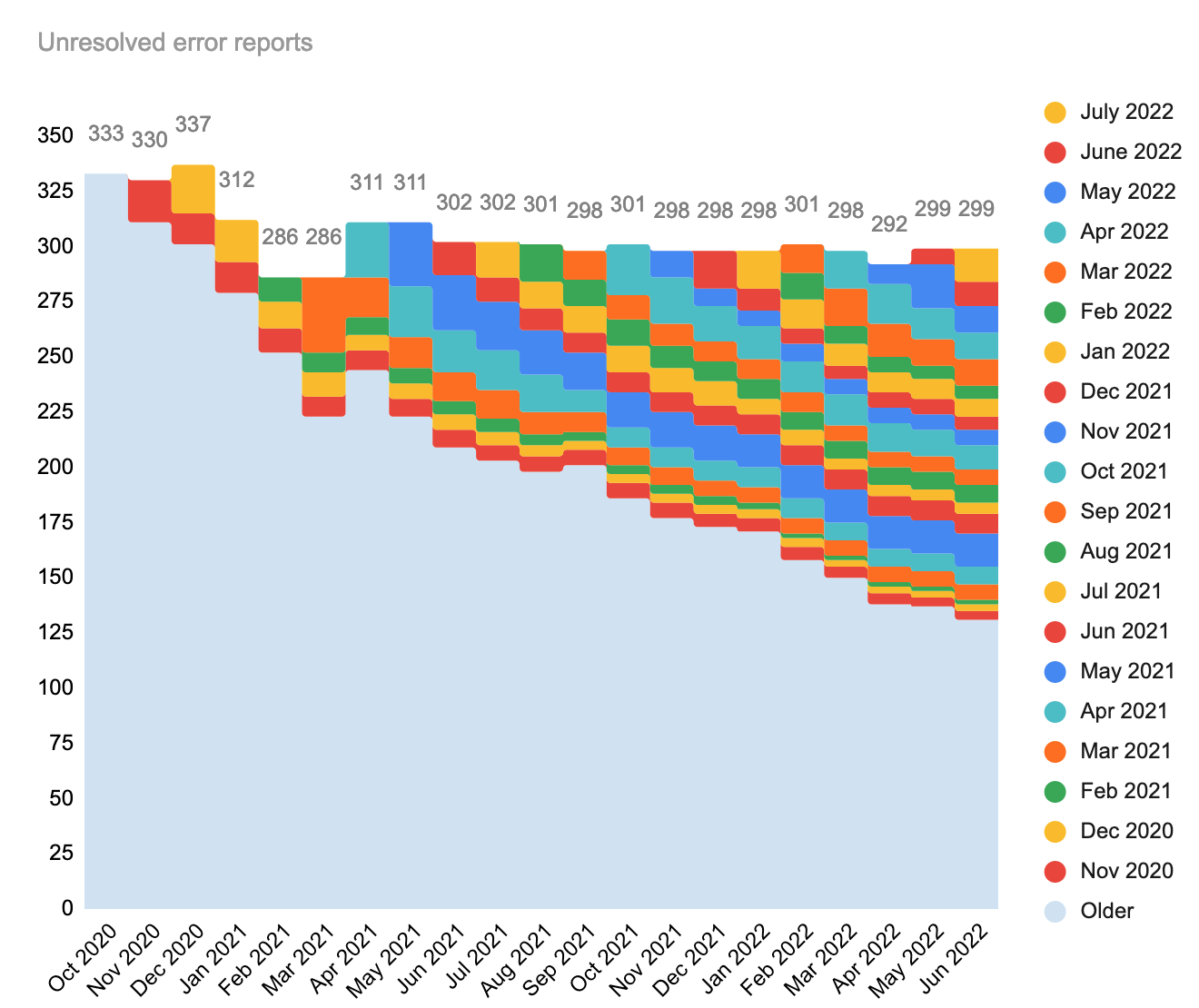

In June and July (which is almost over), we reported 27 and 25 new production errors, respectively. Of these 52 new issues, 27 were closed in the weeks since then, and 25 remain unresolved and will carry over to August.

We also addressed 25 stagnant problems that we carried over from previous months, so the workboard overall remains at exactly 299 unresolved production errors.
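For readers who want to check the bookkeeping, here is a minimal sketch of the arithmetic behind these figures. The variable names are illustrative only; the numbers are the ones quoted above, and the "before" total is derived from them rather than reported separately.

```python
# Figures quoted in this report for June and July 2022.
new_june = 27              # new production errors reported in June
new_july = 25              # new production errors reported in July
closed_new = 27            # new issues already closed
closed_carried_over = 25   # older, stagnant issues resolved
board_after = 299          # unresolved errors on the workboard now

# Derived values (not stated directly in the report).
new_total = new_june + new_july
still_open_new = new_total - closed_new
net_change = new_total - closed_new - closed_carried_over
board_before = board_after - net_change

print(f"New issues: {new_total}, still open: {still_open_new}")
print(f"Net change on the workboard: {net_change}")
print(f"Workboard before: {board_before}, after: {board_after}")
```

The 25 new issues that remain open are offset exactly by the 25 older issues that were resolved, which is why the total does not move.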

Take a look at the Wikimedia-production-error workboard and look for tasks that could use your help.

For the month-over-month numbers, refer to the spreadsheet data.

Thanks!

Thank you to everyone who helped by reporting, investigating, or resolving problems in Wikimedia production.

Until next time,

– Timo Tijhof

"Mr. Vice President. No numbers, no bubbles."

— 🔴🟠🟡🟢🔵🟣
