Last week Marja Hölttä gave a really interesting talk at JSConf EU 2017: "Parsing JavaScript - better lazy than eager".

We should check how much time Wikipedia spends parsing JavaScript on mobile! First, let us go through a couple of particularly interesting slides.

Wikipedia against the world

Marja had a slide comparing how much time top sites spend parsing JavaScript, and we can see that Wikipedia spends more time parsing JS than maps.google.co.jp. I am not sure, though, whether that is our mobile or our desktop version. We should do our own benchmarking (we don't do any today). It would be really interesting to benchmark both mobile and desktop against other sites.

Parsing speed

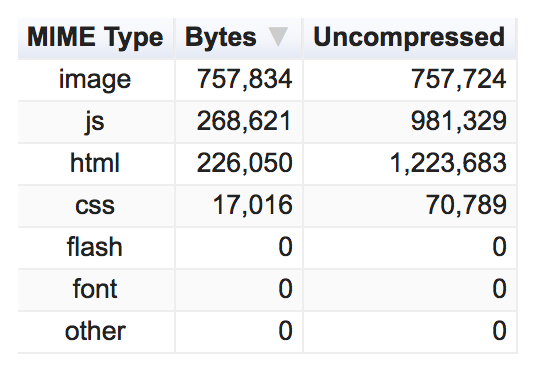

The amount of JavaScript that is shipped matters because the browser needs to spend time parsing it. And remember, it is the uncompressed size that matters, not the compressed size.

Here we can compare how much JavaScript we ship with what single-page web apps ship (although in most cases we don't need the JS to render the page).
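To see the gap between compressed and uncompressed JavaScript on a page, one option is the browser's Resource Timing entries, which expose `encodedBodySize` and `decodedBodySize`. The sketch below is only an illustration: `summarizeScriptBytes` is a hypothetical helper name, it only counts resources whose `initiatorType` is `script` (so inline scripts and scripts fetched another way are missed), and cross-origin scripts served without a `Timing-Allow-Origin` header report sizes of 0.

```javascript
// Sum compressed (encoded) and uncompressed (decoded) bytes for script resources.
// `entries` is expected to look like PerformanceResourceTiming objects.
function summarizeScriptBytes(entries) {
  let encoded = 0;
  let decoded = 0;
  for (const entry of entries) {
    // Assumption: only resources initiated by a <script> element are counted.
    if (entry.initiatorType !== 'script') {
      continue;
    }
    encoded += entry.encodedBodySize;
    decoded += entry.decodedBodySize;
  }
  return { encoded, decoded };
}

// Usage in a browser console, after the page has finished loading:
//   summarizeScriptBytes(performance.getEntriesByType('resource'));
```

The `decoded` total is the number the parser actually has to work through, so that is the one to track over time.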

Summary

Marja summarized how to make things faster: "Ship less code".

Size on desktop

I've tested the Obama article on desktop, and the amount of uncompressed JavaScript is 1 MB.

You can also try this in the latest Chrome (59 and later) and get the amount of unused JavaScript in DevTools, BUT it is broken and doesn't pick up all the JavaScript we use, so please use WebPageTest or other tools to get the correct amount.

Size on mobile

I've also tested the Obama article on mobile, and the amount of uncompressed JavaScript is 0.6 MB, which would then be 0.6 seconds just to parse it. That is more JavaScript than the single-page web apps ship.
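The parse-time figure above is simple arithmetic: uncompressed size divided by parse speed. A minimal sketch follows, assuming the rate of roughly 1 MB per second that the 0.6 MB to 0.6 seconds estimate implies; `estimateParseSeconds` is a hypothetical helper, and real parse speed varies by device and browser.

```javascript
// Rough parse-time estimate: uncompressed size divided by parse speed.
// The default of 1 MB/s is an assumption taken from the estimate in the text.
function estimateParseSeconds(uncompressedMB, parseSpeedMBPerSecond = 1) {
  if (parseSpeedMBPerSecond <= 0) {
    throw new RangeError('parseSpeedMBPerSecond must be positive');
  }
  return uncompressedMB / parseSpeedMBPerSecond;
}

// Mobile figure from the text: 0.6 MB of uncompressed JavaScript.
console.log(estimateParseSeconds(0.6)); // 0.6
```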

Let us discuss this in the performance team meeting on Wednesday and see what our next steps can be.