The Airflow database stores lots of logs and information about dag_runs, tasks, sensors, SLA misses, etc.

Left unchecked, this data will eventually grow large enough to make Airflow's database unusable.

We need to put some mechanism in place that removes unnecessary data from the DB, probably data older than a given number of months.

Here's an example of how we could do it:

https://cloud.google.com/composer/docs/cleanup-airflow-database

Event Timeline

Comment Actions

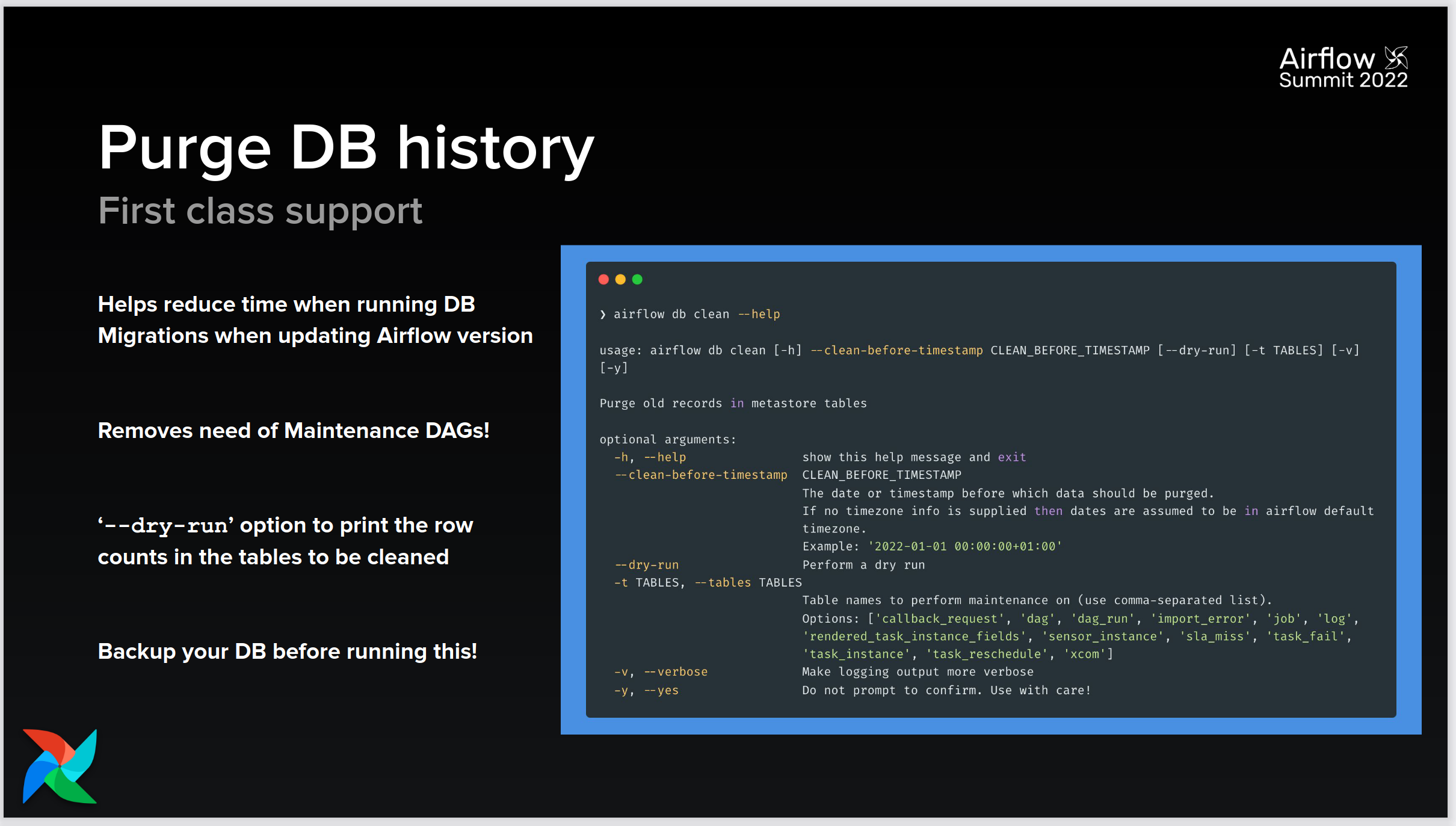

We believe we will be able to use the new airflow db clean command introduced in Airflow 2.3 once we have completed the upgrade to version 2.3.4. See T315580: Upgrade Puppet code to make Airflow configuration files compatible with version 2.5.0 and T309552: Update Airflow DAGs code to make it compatible with version V2.3.4 of Airflow.

https://airflow.apache.org/docs/apache-airflow/2.3.0/release_notes.html#new-features

https://airflowsummit.org/slides/2022/l5-Airflow2_3-Kaxil.pdf
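
As a rough sketch of how the cleanup could be scheduled once the upgrade is done, the helper below builds an airflow db clean command line that purges rows older than a retention window. The function name build_db_clean_command, the 180-day retention and the table list are illustrative assumptions, not something decided in this task; the flags used (--clean-before-timestamp, --tables, --dry-run, --yes) are the ones documented for the Airflow 2.3 CLI. The retention is expressed in days rather than months to keep the date arithmetic simple.

```python
import shlex
from datetime import datetime, timedelta, timezone


def build_db_clean_command(retention_days, now=None, dry_run=True, tables=None):
    """Build the argv list for `airflow db clean` (hypothetical helper).

    retention_days: rows older than this many days are candidates for removal.
    now:            reference time; defaults to the current UTC time.
    dry_run:        if True, only report what would be deleted.
    tables:         optional list of table names to restrict the cleanup to.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)

    cmd = [
        "airflow", "db", "clean",
        "--clean-before-timestamp", cutoff.strftime("%Y-%m-%d"),
    ]
    if tables:
        # The CLI expects a single comma-separated value.
        cmd += ["--tables", ",".join(tables)]
    if dry_run:
        cmd.append("--dry-run")
    else:
        # Skip the interactive confirmation prompt for unattended runs.
        cmd.append("--yes")
    return cmd


# Example: preview a cleanup of two tables with an assumed 180-day retention.
preview = build_db_clean_command(180, tables=["dag_run", "task_instance"])
print(shlex.join(preview))
```

Running the command with --dry-run first, and only then without it, gives a chance to check how many rows would be removed before anything is deleted. The resulting list can be passed to subprocess.run from a cron job or a maintenance DAG.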