User Details

- User Since

- Jun 9 2015, 9:03 AM (463 w, 4 d)

- Availability

- Available

- IRC Nick

- dcausse

- LDAP User

- DCausse

- MediaWiki User

- DCausse (WMF) [ Global Accounts ]

Yesterday

Tagging @serviceops for help with the connectivity issue and the new "delayed connect error: 113" errors.

completion traffic is now served from codfw which has proper indices, lowering prio

This is still happening, raising to UBN

The errors "delayed connect error: 113" seem to have started on Apr 24 at 21:30, right after deploying https://gerrit.wikimedia.org/r/c/operations/puppet/+/1023937.

The errors affect both mw@wikikube and mwmaint1002 https://logstash.wikimedia.org/goto/5ac680b477389129ffb5ddf33fa09940

I think we should switch completion traffic to codfw while we work on a more resilient version of this maint script and also understand why we get these errors.

Wed, Apr 24

Quick update: a fix was deployed two weeks ago (T359580#9699108) to stop pushing these late events.

Tue, Apr 23

Fri, Apr 19

I think there are two issues to be discussed here: defining qualitative requirements and how to repair inconsistencies.

Regarding qualitative requirements, for search and WDQS we don't have a good sense of what would be good enough. The only visible criterion we have at the moment is users complaining about stale data, and without a concrete measurement of the instability it is hard to define a number. Could we go the other way around and start by measuring how consistent the streams are compared to the source of truth? Could this be done for some important streams like revision-create/page-delete/page-undelete/page-state by applying techniques similar to the one used in T215001#7523796? Missed events are probably rare in normal conditions, but I still see huge spikes in the logs with many events failing to reach event-gate (T362977). Could there be ways to improve the situation at a reasonable cost?
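To make "how consistent the streams are compared to the source of truth" concrete, here is a minimal sketch of one possible measurement. It is not the technique from T215001#7523796; it only assumes that, for a given time window, we can list the revision IDs recorded in the source of truth and the revision IDs seen in the stream. The function name and the sample IDs are hypothetical.

```python
from typing import Iterable


def stream_consistency(source_ids: Iterable[int], stream_ids: Iterable[int]) -> dict:
    """Compare IDs from the source of truth with IDs seen in a stream.

    Hypothetical helper: both inputs are assumed to cover the same time window.
    """
    source = set(source_ids)
    stream = set(stream_ids)
    missing = source - stream      # in the source of truth but never emitted
    unexpected = stream - source   # emitted but unknown to the source of truth
    # Share of source-of-truth IDs that made it into the stream.
    ratio = 1.0 if not source else 1 - len(missing) / len(source)
    return {
        "missing": sorted(missing),
        "unexpected": sorted(unexpected),
        "ratio": ratio,
    }


# Made-up sample: one of four revisions never reached the stream.
report = stream_consistency([101, 102, 103, 104], [101, 102, 104])
print(report)
```

Tracking such a ratio per stream and per day would give a number to reason about when discussing what "good enough" means.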

Tue, Apr 16

Mon, Apr 15

Tue, Apr 9

Mon, Apr 8

Reopening since it seems some of these hosts are still mentioned somewhere. The elastic settings check is complaining with CRITICAL - ['elastic2047.codfw.wmnet:9500', 'elastic2052.codfw.wmnet:9500', 'elastic2073.codfw.wmnet:9500', 'elastic2086.codfw.wmnet:9500', 'elastic2092.codfw.wmnet:9500', 'elastic2100.codfw.wmnet:9500', 'elastic2106.codfw.wmnet:9500'] does not match ['elastic2073.codfw.wmnet:9500', 'elastic2086.codfw.wmnet:9500', 'elastic2092.codfw.wmnet:9500', 'elastic2100.codfw.wmnet:9500', 'elastic2106.codfw.wmnet:9500']
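For readability, the difference between the two lists in the check output can be computed with a few lines of Python. This is only an illustration of the comparison, not the actual check; which list is the expected one and which is the configured one is not stated in the message, so the variable names below are neutral.

```python
# Host lists copied from the CRITICAL message above.
first_list = [
    "elastic2047.codfw.wmnet:9500",
    "elastic2052.codfw.wmnet:9500",
    "elastic2073.codfw.wmnet:9500",
    "elastic2086.codfw.wmnet:9500",
    "elastic2092.codfw.wmnet:9500",
    "elastic2100.codfw.wmnet:9500",
    "elastic2106.codfw.wmnet:9500",
]
second_list = [
    "elastic2073.codfw.wmnet:9500",
    "elastic2086.codfw.wmnet:9500",
    "elastic2092.codfw.wmnet:9500",
    "elastic2100.codfw.wmnet:9500",
    "elastic2106.codfw.wmnet:9500",
]

# Hosts present in only one of the two lists.
only_in_first = sorted(set(first_list) - set(second_list))
only_in_second = sorted(set(second_list) - set(first_list))

print("only in first list:", only_in_first)
print("only in second list:", only_in_second)
```

The two lists differ only by elastic2047 and elastic2052.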

Fri, Apr 5

Two scholia queries were rewritten:

- https://www.wikidata.org/wiki/Wikidata:SPARQL_query_service/WDQS_graph_split/Federated_Queries_Examples#Property_paths

- https://www.wikidata.org/wiki/Wikidata:SPARQL_query_service/WDQS_graph_split/Federated_Queries_Examples#Number_of_articles_with_CiTO-annotated_citations_by_year

The page also contains some documentation about how to approach such rewrites.

I'm boldly moving this ticket to our Needs Reporting (prior to being closed) column, as I believe further exploration of how to rewrite scholia queries to support the split could perhaps be better handled in https://github.com/WDscholia/scholia.

Thu, Apr 4

Thanks! I'm not very familiar with alerts being set from grafana either; I'll try to get more info on this. Worst case, we can always set up a new one directly in alertmanager just for the wdqs lag, sent to the search team and using the same formula as updateQueryServiceLag.php.

@Lucas_Werkmeister_WMDE thanks! Do you know where we could update this to include our alert email for such alerts?

According to @Urbanecm_WMF these queries are probably emitted while running https://github.com/wikimedia/mediawiki-extensions-GrowthExperiments/blob/master/maintenance/refreshLinkRecommendations.php.

Discussing possible fixes: it would be ideal if cirrus could detect that it is being run via a maint script and possibly call something like disablePoolCountersAndLogging, but perhaps without disabling statsd since the user script might require stats to be emitted.

Wed, Apr 3

Should be working properly now

Fri, Mar 29

won't be required after all

Thu, Mar 28

I restarted the updater on wdqs1013 and it's catching up, I have a note to check the status tomorrow and will repool it if necessary.

I could re-enable puppet on wdqs1013 and restart the updater to catch up on updates. But apparently this machine was repooled yesterday (as part of the wdqs scap deploy, I suppose) and thus started to serve stale data without triggering any maxlag. It was only when re-enabling puppet that I realized this node was still pooled, so I depooled it immediately, but this caused a maxlag for several minutes.

Scap repooling machines is something we might look into to avoid this kind of issue in the future.

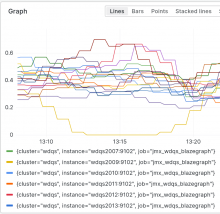

After depooling the node we can see the query rate actually going down to 0. The request rate is generally very low on codfw, so we might have to tune the threshold to around 0.2.

Mar 26 2024

The approach taken is:

- from nginx, control a new header named 'x-monitoring-query', set to true if a list of criteria is met (currently using user-agent strings but could be extended to use source IPs as well, I suppose)

- from blazegraph, do not log queries that have the header x-monitoring-query set

- adapt Wikidata.org to allow tuning the minimal query rate expected to be served from a pooled server (was hardcoded to 1.0)

- change the systemd timer that runs updateQueryServiceLag.php to set --pooled-server-min-query-rate to 0.5 (will need to double check that this value is sane and works well for codfw and eqiad servers)
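The steps above can be sketched as follows. The real logic lives in the nginx configuration, Blazegraph and updateQueryServiceLag.php; this Python version only illustrates the idea. The function names, the prefix matching and the `>=` comparison are assumptions, and the user-agent prefixes are taken from the UAs reported for the depooled server.

```python
# User-agent prefixes treated as monitoring traffic (assumed matching rule).
MONITORING_UA_PREFIXES = (
    "check_http/",
    "Twisted PageGetter",
    "prometheus-public-sparql-ep-check",
    "wmf-prometheus/prometheus-blazegraph-exporter",
)


def is_monitoring_query(user_agent: str) -> bool:
    """Decide whether a request would get 'x-monitoring-query: true'."""
    return user_agent.startswith(MONITORING_UA_PREFIXES)


def counted_query_rate(user_agents: list[str], window_seconds: float) -> float:
    """Query rate once monitoring queries are excluded from the count."""
    counted = sum(1 for ua in user_agents if not is_monitoring_query(ua))
    return counted / window_seconds


def looks_pooled(query_rate: float, min_query_rate: float = 0.5) -> bool:
    """Treat a server as pooled if it serves at least the minimal query rate."""
    return query_rate >= min_query_rate


# Made-up sample: a depooled server that only receives monitoring traffic.
sample = ["Twisted PageGetter"] * 40 + ["prometheus-public-sparql-ep-check"] * 30
rate = counted_query_rate(sample, window_seconds=60)
print(rate, looks_pooled(rate))
```

With monitoring queries excluded, a depooled server that only receives health checks ends up with a counted rate of 0 and is no longer mistaken for a pooled one.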

Here are the UAs seen in an hour on a depooled server:

| UA | count |
|---|---|
| check_http/v2.3.3 (monitoring-plugins 2.3.3) | 87 |
| Twisted PageGetter | 2146 |
| prometheus-public-sparql-ep-check | 1913 |
| wmf-prometheus/prometheus-blazegraph-exporter (root@wikimedia.org) | 120 |
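As a quick sanity check on these counts, the arithmetic below converts them to a request rate. It assumes the counts cover exactly one hour and that every one of these requests counted toward the query rate; neither is confirmed here.

```python
# Counts per user agent, copied from the table above.
ua_counts = {
    "check_http/v2.3.3 (monitoring-plugins 2.3.3)": 87,
    "Twisted PageGetter": 2146,
    "prometheus-public-sparql-ep-check": 1913,
    "wmf-prometheus/prometheus-blazegraph-exporter (root@wikimedia.org)": 120,
}

total_requests = sum(ua_counts.values())
# Assumption: the counts were collected over exactly one hour.
requests_per_second = total_requests / 3600

print(total_requests, round(requests_per_second, 3))
```

Under those assumptions this is 4266 requests, or roughly 1.19 requests per second, which is above the previously hardcoded minimum of 1.0 even though the server was depooled.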

Mitigation on wdqs1013:

- blazegraph stopped

- updater stopped with the /srv/wdqs/data_loaded flag removed

- puppet disabled

Mar 25 2024

Mar 21 2024

Mar 8 2024

Mar 7 2024

Discussed the issue today with @JAllemandou: the reason is that CirrusSearch might send these outdated events in some circumstances. We will fix the root cause (T359580), and in the meantime the alerts for this dataset can be ignored.

Mar 5 2024

changed the layout of the query a bit by moving the logistic function introduced in T271799 to the top-level so that it wraps the new nearmatch clause

Mar 4 2024

Compiled 10 real world examples at https://www.wikidata.org/wiki/Wikidata:SPARQL_query_service/WDQS_graph_split/Federated_Queries_Examples

final report available at https://wikitech.wikimedia.org/wiki/Wikidata_Query_Service/WDQS_Graph_Split_Impact_Analysis

@Physikerwelt thanks for your feedback.

The new builder moved the result to #4, which is better but still not enough; it's beaten by 3 other images because of other criteria:

- weighted_tags:image.linked.from.wikipedia.lead_image/Q458

- statement_keywords:p180=q458

Mar 1 2024

Since the upgrade I believe that we are affected by https://github.com/ether/etherpad-lite/issues/5401. Wondering if a stale settings.json file got kept with padOptions.userName & userColor set to false instead of null.