From T344814 (mw-on-k8s tls-proxy container CPU throttling at low average load) we've learned that removing limits from the service mesh while setting a fixed envoy concurrency (of 12) removes throttling (obviously) without causing runaway CPU usage by envoy.

We do have other services potentially suffering from envoy throttling (T345243, T345244, T353460) where we might not want to remove limits altogether. According to research (https://wikitech.wikimedia.org/wiki/Kubernetes/Resource_requests_and_limits#envoy), istio improves this by setting envoy concurrency to a value better suited to the actual CPU limit set for the container (as max(ceil(<cpu-limit-in-whole-cpus>), 2)).
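
To make the formula concrete, here is a minimal sketch of how the concurrency value would fall out of a container's CPU limit. It only implements the formula as quoted above and is not taken from istio's code; the helper names `cpu_limit_to_cores` and `envoy_concurrency` are made up for illustration, and the parser only handles plain ("2") and millicore ("500m") CPU quantities.

```python
import math


def cpu_limit_to_cores(limit: str) -> float:
    """Convert a Kubernetes CPU quantity ("500m" or "2") to whole CPUs.

    Hypothetical helper: only plain and millicore notations are handled.
    """
    if limit.endswith("m"):
        return int(limit[:-1]) / 1000
    return float(limit)


def envoy_concurrency(limit: str) -> int:
    """Apply max(ceil(<cpu-limit-in-whole-cpus>), 2) to a CPU limit."""
    return max(math.ceil(cpu_limit_to_cores(limit)), 2)


if __name__ == "__main__":
    for limit in ("500m", "1", "2500m", "12"):
        print(f"{limit:>6} -> concurrency {envoy_concurrency(limit)}")
```

With a 500m limit this yields a concurrency of 2, which matches the value used in the T353460 test.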

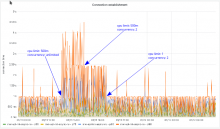

In T353460 we did a small test running envoy with 500m CPU limit with default concurrency (first spike) and with concurrency set to 2 (second spike):

While this paints a pretty clear picture in terms of throttling, it also seems to have increased latency quite significantly (as in more than doubled it). Unfortunately there is a big spread in latency (depending on the type of request) on the upstream side as well, so it can't be said for sure from the current data how big envoy's role is.