Once the base pipeline is ready (T358699), we should create an Airflow DAG that formats the monthly dump files and writes them to a public location.

This work includes writing Spark SQL queries to format the data into the desired file shape.
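
As a rough idea of what such a query could look like, the helper below renders a Spark SQL statement that selects one month of a base dataset and joins its columns into a single tab-separated line per row. The table name, the column list and the `year`/`month` partition columns are hypothetical placeholders, not the actual Commons Impact Metrics schema.

```python
from typing import Sequence


def build_dump_query(source_table: str, columns: Sequence[str], year: int, month: int) -> str:
    """Render a Spark SQL query producing one TSV-formatted line per row.

    Hypothetical sketch: source_table, columns and the year/month partition
    columns are placeholders to be replaced with the real base dataset schema.
    """
    if not columns:
        raise ValueError("at least one column is required")
    if not 1 <= month <= 12:
        raise ValueError(f"invalid month: {month}")
    # CAST to STRING so concat_ws accepts non-string columns. concat_ws skips
    # NULLs, so COALESCE turns them into empty fields and keeps column positions.
    fields = ",\n    ".join(f"COALESCE(CAST({c} AS STRING), '')" for c in columns)
    return (
        "SELECT concat_ws('\\t',\n"
        f"    {fields}\n"
        ") AS line\n"
        f"FROM {source_table}\n"
        f"WHERE year = {year} AND month = {month}"
    )
```

Emitting a single `line` column keeps the output trivially writable as plain text files; values containing tabs or newlines would still need an escaping rule, which is part of the format design.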

The DAG should execute those queries and then archive the results in their final location.
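
Spark usually writes query output as a directory of `part-*` files, so the archive step mostly consists of taking the single output file (assuming the query output is coalesced into one partition) and moving it to its final name. The sketch below is a local-filesystem stand-in for that step; the real one would act on HDFS, and the function name is hypothetical.

```python
import shutil
from pathlib import Path


def archive_single_output(output_dir: Path, destination: Path) -> Path:
    """Move the single part file Spark wrote in output_dir to destination.

    Hypothetical local-filesystem sketch; assumes the output was coalesced
    into exactly one part file.
    """
    # Ignore _SUCCESS markers and hidden .crc files; keep only data files.
    parts = sorted(p for p in output_dir.iterdir() if p.name.startswith("part-"))
    if len(parts) != 1:
        raise RuntimeError(
            f"expected exactly one part file in {output_dir}, found {len(parts)}"
        )
    destination.parent.mkdir(parents=True, exist_ok=True)
    shutil.move(str(parts[0]), str(destination))
    # Remove the leftover Spark output directory (_SUCCESS, checksum files).
    shutil.rmtree(output_dir)
    return destination
```

Failing loudly when there is more than one part file avoids silently publishing a partial dump.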

This DAG should also run monthly, right after the base pipeline finishes (a sensor should wait until the base datasets for the month in question are present).
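
The sensor's check can be sketched as a plain function: for the month being processed, every base dataset partition must exist and carry a `_SUCCESS` flag. With an Airflow `@monthly` schedule, the run covering a given month starts once that month is over, and its `data_interval_start` is the first day of that month. The `<dataset>/year=YYYY/month=M` layout and the `_SUCCESS` convention below are assumptions for illustration.

```python
from datetime import date
from pathlib import Path
from typing import List, Sequence


def partition_paths(base_dir: Path, datasets: Sequence[str], month_start: date) -> List[Path]:
    """Paths of the monthly partitions to wait for (hypothetical layout)."""
    return [
        base_dir / ds / f"year={month_start.year}" / f"month={month_start.month}"
        for ds in datasets
    ]


def base_data_ready(base_dir: Path, datasets: Sequence[str], month_start: date) -> bool:
    """Poke-style check: True only when every dataset partition is complete."""
    if not datasets:
        raise ValueError("no base datasets configured")
    return all((p / "_SUCCESS").exists() for p in partition_paths(base_dir, datasets, month_start))
```

A sensor would call this repeatedly until it returns `True`, so the dump tasks never start on a half-written month.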

Initially, each base dataset should have its own dump, and the contents of each dump should be essentially the same as the base dataset.
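
Since each dump mirrors one base dataset, a dump is essentially that dataset's monthly rows serialized to a compressed file. The sketch below assumes bzip2-compressed TSV and a `<dataset>_<YYYY-MM>.tsv.bz2` naming scheme; both are placeholders for the format decisions listed in the tasks, not decided conventions.

```python
import bz2
import csv
from datetime import date
from pathlib import Path
from typing import Iterable, Sequence


def dump_file_name(dataset: str, month_start: date) -> str:
    # Hypothetical naming scheme: one file per base dataset and month.
    return f"{dataset}_{month_start:%Y-%m}.tsv.bz2"


def write_dump(rows: Iterable[Sequence[object]], path: Path) -> int:
    """Write rows as bzip2-compressed TSV and return the number of rows written."""
    count = 0
    with bz2.open(path, "wt", encoding="utf-8", newline="") as f:
        # csv quotes fields containing tabs, quotes or newlines; None becomes "".
        writer = csv.writer(f, delimiter="\t", lineterminator="\n")
        for row in rows:
            writer.writerow(row)
            count += 1
    return count
```

Returning the row count gives a cheap sanity check when vetting the generated files against the base dataset.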

Maybe we don't need an extra DAG for this; perhaps the dump creation can be done in the original pipeline DAG?

Tasks:

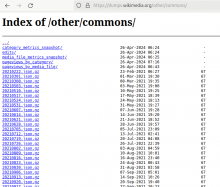

- Design the format of the dumps (TSV? Compression? Naming? Location? etc.)

- Write the queries that format the base Commons Impact Metrics datasets into the expected file shape.

- Write the Airflow DAG that waits for the base data to be present, executes the queries and archives the resulting files.

- Test in Airflow's dev instance

- Vet the generated files

- Code-review and deploy

- Make sure there are mechanisms in place to make the generated dump files public (rsync?)

- Write the description page (README) on dumps.wikimedia.org
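
The DAG described in the tasks (wait for the base data, run one formatting query per dataset, archive each result, then publish) forms a small dependency graph. The sketch below models it with the standard library's `graphlib`; task names and the final `publish_dumps` step (e.g. an rsync) are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Dict, Sequence, Set


def build_dump_dag(datasets: Sequence[str]) -> Dict[str, Set[str]]:
    """Return {task: upstream tasks} for the hypothetical dump DAG."""
    graph: Dict[str, Set[str]] = {"wait_for_base_data": set()}
    archive_tasks: Set[str] = set()
    for ds in datasets:
        # One format + archive chain per base dataset, all gated by the sensor.
        graph[f"format_{ds}"] = {"wait_for_base_data"}
        graph[f"archive_{ds}"] = {f"format_{ds}"}
        archive_tasks.add(f"archive_{ds}")
    # Publishing runs only after every dump has been archived.
    graph["publish_dumps"] = archive_tasks
    return graph


order = list(TopologicalSorter(build_dump_dag(["ds_a", "ds_b"])).static_order())
```

In Airflow the same edges would be declared between operators with `>>`; the per-dataset chains can run in parallel once the sensor succeeds.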

Definition of done:

- The queries work properly and are in the corresponding repo (probably refinery?)

- The DAG is in production and running

- There's a first version of the dumps in the chosen public location (probably the same as other dumps, like the pageview dumps)