Labvirt 1015 has now crashed twice. The first time it happened I rebooted it from mgmt and the console showed an endless stream of gibberish -- after a second reboot it came up again and appeared healthy but then died again a few days later.

Timeline

- 2017-07-24: Opened ticket to track crashes issue after 2 crashes

- 2017-08-14: "drained flea power" & cleared system log

- 2017-08-16: Crashed

- 2017-08-30: Crashed

- 2017-09-11: RMA for CPU replacement

- 2017-09-12: CPU in slot 2 replaced

- 2017-09-29: Moved 9 VMs to host

- 2017-10-01: Crashed

- 2017-10-18: Swapped CPU1 and CPU2

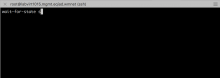

- 2017-10-20: Stress test, crashed

- 2017-10-23: RMA for CPU + mainboard replacement

- 2017-10-25: Mainboard declined; CPU approved

- 2017-11-03: CPU replaced

- 2017-11-03: Stress test; no crash

- 2017-11-05: Stress test; no crash

- 2017-11-07: Stress test; no crash

- 2017-11-08: Stress test; no crash