For the JSON content translation dumps, I'm running into an issue where there appear to be duplicate commas that prevent Python from loading the dumps as JSON objects. As I understand it, this is not a Python issue: the JSON itself is malformed. I have observed this in at least four dumps, listed below.
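All of the errors reported below have the form `Expecting value: line N column 1`. The sketch shows how a doubled comma produces exactly that message. The input is a hypothetical miniature (a JSON array with one record per line), not the real dump layout. After the first comma, the parser expects the next array element. The second comma at the start of the following line is not a value, so the error points at column 1 of that line.

```python
import json


def describe_decode_error(text):
    """Return str() of the JSONDecodeError raised for `text`, or None if it parses."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        return str(err)
    return None


# Hypothetical miniature of a dump: a JSON array with one record per line,
# where line 3 is a second, stray comma after the first record.
broken = '[\n{"id": 1},\n,\n{"id": 2}\n]'
print(describe_decode_error(broken))
# prints: Expecting value: line 3 column 1 (char 13)

# Without the stray line the same records parse fine.
print(describe_decode_error('[\n{"id": 1},\n{"id": 2}\n]'))
# prints: None
```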

I'm running the following Python code:

import gzip

import json

with gzip.open('cx-corpora.en2fa.text.json.gz', 'rt') as fin:

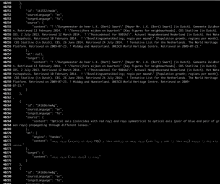

m = json.load(fin)What this looks if we use zless to scan the file and find the line where the error occurs (across lines 40261-40262 for the en2fa file):
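You can do the same inspection from Python instead of zless. The sketch below assumes the dump fits in memory. It parses the text, takes the failing line number from `JSONDecodeError.lineno`, and returns the surrounding lines. `error_context` and `dump_error_context` are hypothetical helper names, not part of any library.

```python
import gzip
import json


def error_context(text, radius=1):
    """Return (line number, line) pairs around the first JSON decode error.

    Line numbers are 1-based and count newline characters, matching
    json.JSONDecodeError.lineno. Returns an empty list if `text` parses.
    """
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        # split('\n') rather than splitlines(): splitlines() also breaks on
        # characters such as U+2028, which may appear raw inside JSON strings
        # and would shift the numbering.
        lines = text.split('\n')
        first = max(1, err.lineno - radius)
        last = min(len(lines), err.lineno + radius)
        return [(n, lines[n - 1]) for n in range(first, last + 1)]
    return []


def dump_error_context(path, radius=1):
    """Read a dump (gzipped if the name ends in .gz) and call error_context."""
    opener = gzip.open if path.endswith('.gz') else open
    with opener(path, 'rt', encoding='utf-8') as fin:
        return error_context(fin.read(), radius)
```

For example, `dump_error_context('cx-corpora.en2fa.text.json.gz')` should return lines 40261 to 40263 of that dump, given the error position reported for it.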

If I remove line 40262, the JSON loads correctly. These are the dumps I checked that fail with the same error:

https://dumps.wikimedia.org/other/contenttranslation/20190301/cx-corpora.en2eo.text.json.gz

- json.decoder.JSONDecodeError: Expecting value: line 3482 column 1 (char 313148)

https://dumps.wikimedia.org/other/contenttranslation/20190301/cx-corpora.en2es.html.json.gz

- json.decoder.JSONDecodeError: Expecting value: line 88895 column 1 (char 52090103)

https://dumps.wikimedia.org/other/contenttranslation/20190301/cx-corpora.en2fa.text.json.gz

- json.decoder.JSONDecodeError: Expecting value: line 40262 column 1 (char 3413239)

https://dumps.wikimedia.org/other/contenttranslation/20190222/cx-corpora.en2es.text.json.gz

- json.decoder.JSONDecodeError: Expecting value: line 88895 column 1 (char 8166998)
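Until the dumps are fixed upstream, a workaround could automate the manual fix of deleting line 40262. The sketch below assumes, as the en2fa case suggests, that every offending line contains nothing but the extra comma. `loads_dropping_stray_commas` and `load_dump` are hypothetical helper names. Any other decode error, or a failing line with real content, is re-raised rather than deleted, so no records are dropped silently.

```python
import gzip
import json


def loads_dropping_stray_commas(text, max_fixes=100):
    """Parse `text`, deleting lines that consist only of a stray comma.

    Returns (obj, removed), where `removed` lists the 1-based numbers of the
    deleted lines in the original text. Any other decode error is re-raised.
    """
    lines = text.split('\n')
    removed = []
    while True:
        try:
            return json.loads('\n'.join(lines)), removed
        except json.JSONDecodeError as err:
            bad = lines[err.lineno - 1]  # err.lineno is 1-based
            if (err.msg != 'Expecting value' or bad.strip() != ','
                    or len(removed) >= max_fixes):
                raise  # not the duplicate-comma pattern: don't guess
            # Every earlier deletion precedes this line, so add their count
            # to map back to the line number in the original text.
            removed.append(err.lineno + len(removed))
            del lines[err.lineno - 1]


def load_dump(path):
    """Read a dump (gzipped if the name ends in .gz) and parse it leniently."""
    opener = gzip.open if path.endswith('.gz') else open
    with opener(path, 'rt', encoding='utf-8') as fin:
        return loads_dropping_stray_commas(fin.read())
```

Under that assumption, `obj, removed = load_dump('cx-corpora.en2fa.text.json.gz')` would return the parsed data with `removed == [40262]`, if that is the only bad line. Each deletion re-parses the whole text, which is acceptable for a handful of bad lines but not for many.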