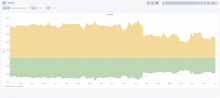

While doing T219123 amd moving from Q120k items read by clients to Q256 we noticed some increased load on the sql servers.

Se since rolled back from 256 back to 120.

HEre are some of the messages from operations on IRC at the time.

3:16 PM <Amir1> marostegui: is this because of your alter? https://grafana.wikimedia.org/d/000000273/mysql?orgId=1&var-dc=eqiad%20prometheus%2Fops&var-server=db1126&var-port=9104&from=now-3h&to=now 3:16 PM <Amir1> or should I scream 3:17 PM <marostegui> definitely not 3:17 PM <marostegui> my alter is on db1087 3:17 PM <marostegui> something is going on there 3:18 PM <marostegui> Lots of SELECT /* Wikibase\Lib\Store\Sql\Terms\PrefetchingItemTermLookup::prefetchTerms */ wbit_item_id,wb 3:18 PM <jynus> could we reduce weight from db1126 3:19 PM <marostegui> db1126 is the loadest (500) 3:19 PM <jynus> yes 3:19 PM <jynus> that is why I say to reduce it 3:19 PM <Amir1> most of them should get handled by the cache 3:19 PM <jynus> it has close to 30K QPS 3:19 PM <jynus> and db1087 is depooled 3:19 PM <marostegui> db1104 is also having an increase: https://grafana.wikimedia.org/d/000000273/mysql?orgId=1&from=now-12h&to=now&var-dc=eqiad%20prometheus%2Fops&var-server=db1104&var-port=9104 3:20 PM <jynus> it is peaking at 1200 ongoing connections 3:20 PM <marostegui> maybe we can decrease db1126 to 400 and increase db1101 from 50 to 100 on main 3:20 PM <marostegui> let me do that 3:20 PM <jynus> just reduce the load on db1126 3:20 PM <jynus> shift it to the other servers 3:21 PM <jynus> it will give us some time 3:22 PM <Amir1> we are only at Q256K items so far 3:22 PM <Amir1> should I stop going further? I think so 3:22 PM <marostegui> Amir1: yep 3:22 PM <jynus> load went ok 3:22 PM <jynus> for now 3:22 PM <marostegui> Amir1: Remember our conversation about how hard was to measure how much a host can handle? hehe 3:22 PM <marostegui> Amir1: we got the first warning! 3:22 PM <jynus> 400 connections, much less 3:23 PM <jynus> inserts and updates spiked 3:23 PM <Amir1> :( Can I look at something to see what I can improve? 3:23 PM <Amir1> tendril? 3:23 PM <jynus> latency increased 5x 3:24 PM <jynus> https://grafana.wikimedia.org/d/000000273/mysql?orgId=1&var-dc=eqiad%20prometheus%2Fops&var-server=db1126&var-port=9104&fullscreen&panelId=40&from=1582547044507&to=1582557844508 3:24 PM <marostegui> Amir1: I was checking the queries, so far I haven't found anything terribly wrong 3:26 PM <jynus> cpu on db1126 spiked at 88%, something that rarely happens on mysql 3:26 PM ⇐ Cohaf quit (uid344643@wikimedia/Cohaf) 3:26 PM <jynus> load 50 on a 16 thread cpu 3:27 PM <jynus> it got automatically depooled at 15:13 I think 3:27 PM <jynus> for some time 3:28 PM <Amir1> should I scale back? 3:28 PM <jynus> things are ok now 3:28 PM <jynus> but we should keep monitoring 3:29 PM <addshore> lets monitor closely, and investigate, and maybe scale back in a bit 3:29 PM <marostegui> Amir1: I am seeing a huge query, don't know if it is normal 3:29 PM <marostegui> Amir1: let me send it to you 3:30 PM <marostegui> done 3:30 PM <jynus> in particular https://grafana.wikimedia.org/d/000000278/mysql-aggregated?orgId=1&var-dc=eqiad%20prometheus%2Fops&var-group=core&var-shard=s8&var-role=slave&fullscreen&panelId=11&from=1582536615469&to=1582558215469 3:30 PM <moritzm> !log updated component/jdk8 to 8u242-b08-1~deb10u1 (forward port of latest Java 8 security update) 3:30 PM <+stashbot> Logged the message at https://wikitech.wikimedia.org/wiki/Server_Admin_Log 3:30 PM <addshore> marostegui: can i have a copy too? :) 3:30 PM <marostegui> addshore: sorry!, of course 3:31 PM <+icinga-wm> PROBLEM - Check systemd state on idp1001 is CRITICAL: CRITICAL - degraded: The system is operational but one or more units failed. https://wikitech.wikimedia.org/wiki/Monitoring/check_systemd_state 3:31 PM <Amir1> technically it's just a large batch, addshore I think we should split large group of entities to smaller batch sizes. What do you think? 3:32 PM <addshore> sounds like it might be needed 3:32 PM <addshore> but also we should look at it a bit closer before jumping to that conclusion :) 3:32 PM <jynus> marostegui: I am checking https://icinga.wikimedia.org/cgi-bin/icinga/status.cgi?search_string=MariaDB+read+only+s8 and I am thinking, if the current state continues, we may want to rebalance the weights a bit 3:33 PM <marostegui> jynus: yeah, let's try to leave the dust settle for now 3:33 PM → Hamishcn joined (~Hamishcn@58.20.79.171) 3:33 PM <jynus> sure, just thinking mid-term 3:33 PM <marostegui> there's not much more room for changes there though 3:34 PM <marostegui> we can increase weight for the multiinstance a bit, after all they all now have the same schemas! 3:34 PM <jbond42> ^idp1001 is me 3:34 PM <jynus> marostegui: indeed 3:34 PM <jynus> marostegui: we could even depool those from other sections in an emergency and give them close-to-full weights 3:34 PM <marostegui> yeah 3:34 PM <marostegui> that too 3:35 PM <jynus> lets wait for now 3:35 PM <Amir1> something needs terms of 9000 different entities, that's weird 3:35 PM <addshore> Amir1: sounds like the sort of thing we could log? 3:35 PM <jynus> Amir1: that looks like cpu increase to me 3:35 PM <jynus> as in, a good candidate for the bump 3:38 PM <+icinga-wm> PROBLEM - Prometheus jobs reduced availability on icinga1001 is CRITICAL: job=idp site=eqiad https://wikitech.wikimedia.org/wiki/Prometheus%23Prometheus_job_unavailable https://grafana.wikimedia.org/d/NEJu05xZz/prometheus-targets 3:38 PM <jynus> db1126 latency went down again, but I would keep it in the current wait for some time 3:38 PM halAFK → halfak 3:38 PM <marostegui> yeah, let's not touch it for now 3:39 PM <Amir1> DBPerformance channel would log it 3:39 PM <addshore> Amir1: does that have stacktraces? 3:40 PM <Amir1> yup, this one seems unrelated: https://logstash.wikimedia.org/app/kibana#/doc/logstash-*/logstash-mediawiki-2020.02.24/mediawiki?id=AXB32jwDh3Uj6x1zH7RT&_g=h@44136fa 3:40 PM <jynus> in fact, it went a bit up again 3:40 PM <Amir1> but an example 3:41 PM <addshore> Amir1: yay stacktraces, lets do some digging in mattermost? 3:51 PM <jynus> Amir1, addshore: wikidata latency p50 seems controlled, but p99 seems high (aka no outage but something to check performance-wise) https://grafana.wikimedia.org/d/000000273/mysql?orgId=1&var-dc=eqiad%20prometheus%2Fops&var-server=db1126&var-port=9104&fullscreen&panelId=40&from=1582548658209&to=1582559458209 3:52 PM <Amir1> I think we should scale back for now 3:52 PM <Amir1> addshore: what do you think? 3:52 PM <Amir1> just one step, from Q256 -> Q120 3:52 PM <James_F> Is it getting better? 3:52 PM → wdoran_ joined (sid357666@gateway/web/irccloud.com/x-lhdmgruhenxfbwco) 3:52 PM <addshore> Amir1: yes lets half it 3:52 PM <addshore> while we investigate further 3:54 PM <+wikibugs> (CR) Ladsgroup: [C: +2] Revert "Increase the reads for term store for clients for up to Q256K" [mediawiki-config] - https://gerrit.wikimedia.org/r/574498 (owner: Ladsgroup) 3:54 PM ↔ wdoran_ nipped out 3:55 PM <+wikibugs> (Merged) jenkins-bot: Revert "Increase the reads for term store for clients for up to Q256K" [mediawiki-config] - https://gerrit.wikimedia.org/r/574498 (owner: Ladsgroup) 3:57 PM <+logmsgbot> !log ladsgroup@deploy1001 Synchronized wmf-config/InitialiseSettings.php: Revert: [[gerrit:574454|Increase the reads for term store for clients for up to Q256K (T219123)]] (duration: 00m 56s)

During investigation we notices some large queries coming from the description api module:

Query returned 15034 row(s):

query-m: SELECT wbit_item_id,wbit_term_in_lang_id FROM `wbt_item_terms` WHERE wbit_item_id IN (N,...,N) [TRX#99c69e]

#0 /srv/mediawiki/php-1.35.0-wmf.20/includes/libs/rdbms/database/Database.php(1344): Wikimedia\Rdbms\TransactionProfiler->recordQueryCompletion(Object(Wikimedia\Rdbms\GeneralizedSql), 1582558754.9982, false, 15034)

#1 /srv/mediawiki/php-1.35.0-wmf.20/includes/libs/rdbms/database/Database.php(1226): Wikimedia\Rdbms\Database->executeQueryAttempt('SELECT wbit_it...', 'SELECT /* Wikib...', false, 'Wikibase\\Lib\\St...', 0)

#2 /srv/mediawiki/php-1.35.0-wmf.20/includes/libs/rdbms/database/Database.php(1162): Wikimedia\Rdbms\Database->executeQuery('SELECT wbit_it...', 'Wikibase\\Lib\\St...', 0)

#3 /srv/mediawiki/php-1.35.0-wmf.20/includes/libs/rdbms/database/Database.php(1832): Wikimedia\Rdbms\Database->query('SELECT wbit_it...', 'Wikibase\\Lib\\St...')

#4 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/lib/includes/Store/Sql/Terms/PrefetchingItemTermLookup.php(107): Wikimedia\Rdbms\Database->select('wbt_item_terms', Array, Array, 'Wikibase\\Lib\\St...')

#5 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/lib/includes/Store/Sql/Terms/TermStoresDelegatingPrefetchingItemTermLookup.php(72): Wikibase\Lib\Store\Sql\Terms\PrefetchingItemTermLookup->prefetchTerms(Array, Array, Array)

#6 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/data-access/src/ByTypeDispatchingPrefetchingTermLookup.php(52): Wikibase\Lib\Store\Sql\Terms\TermStoresDelegatingPrefetchingItemTermLookup->prefetchTerms(Array, Array, Array)

#7 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/data-access/src/ByTypeDispatchingPrefetchingTermLookup.php(52): Wikibase\DataAccess\ByTypeDispatchingPrefetchingTermLookup->prefetchTerms(Array, Array, Array)

#8 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/client/includes/Store/DescriptionLookup.php(143): Wikibase\DataAccess\ByTypeDispatchingPrefetchingTermLookup->prefetchTerms(Array, Array, Array)

#9 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/client/includes/Store/DescriptionLookup.php(73): Wikibase\Client\Store\DescriptionLookup->getCentralDescriptions(Array)

#10 /srv/mediawiki/php-1.35.0-wmf.20/extensions/Wikibase/client/includes/Api/Description.php(72): Wikibase\Client\Store\DescriptionLookup->getDescriptions(Array, Array, Array)

#11 /srv/mediawiki/php-1.35.0-wmf.20/includes/api/ApiQuery.php(255): Wikibase\Client\Api\Description->execute()

#12 /srv/mediawiki/php-1.35.0-wmf.20/includes/api/ApiMain.php(1586): ApiQuery->execute()

#13 /srv/mediawiki/php-1.35.0-wmf.20/includes/api/ApiMain.php(522): ApiMain->executeAction()

#14 /srv/mediawiki/php-1.35.0-wmf.20/includes/api/ApiMain.php(493): ApiMain->executeActionWithErrorHandling()

#15 /srv/mediawiki/php-1.35.0-wmf.20/api.php(84): ApiMain->execute()

#16 /srv/mediawiki/w/api.php(3): require('/srv/mediawiki/...')

#17 {main}This query should not be selecting 15k rows... when it only returns 50 descriptions in the result