@Protsack.stephan I found one nearly monotonic column in the dumps: .event.date_created. But I'm still having trouble understanding the full pipeline: these event objects have type: update, but the dates span roughly 14 months. Which stream does this part of the data come from? Maybe this is a bucket of titles, and the update events come from a job which refreshes the titles in order to capture renaming and new articles?
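For anyone who wants to quantify "nearly monotonic", here is a minimal sketch that counts how often consecutive .event.date_created values go backwards. It assumes the values are ISO 8601 strings (possibly with a trailing Z) and have already been extracted in dump order; the helper names and the sample values are made up for illustration.

```python
from datetime import datetime
from typing import Iterable, Tuple


def parse_ts(value: str) -> datetime:
    # Assumption: timestamps are ISO 8601; map a trailing "Z" to an explicit
    # UTC offset so that older Python versions can parse it too.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def count_inversions(timestamps: Iterable[str]) -> Tuple[int, int]:
    """Return (adjacent pairs that go backwards, total adjacent pairs)."""
    backwards = 0
    pairs = 0
    previous = None
    for raw in timestamps:
        current = parse_ts(raw)
        if previous is not None:
            pairs += 1
            if current < previous:
                backwards += 1
        previous = current
    return backwards, pairs


# Hypothetical values in dump order, not taken from a real dump.
created_sample = [
    "2023-01-01T00:00:00Z",
    "2023-06-01T00:00:00Z",
    "2023-05-31T00:00:00Z",
    "2024-02-01T00:00:00Z",
]
print(count_inversions(created_sample))
```

A low ratio of backwards pairs to total pairs would support the "nearly monotonic" reading; it says nothing about which stream produced the events.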

Today

Yesterday

@Lina_Farid_WMDE Thanks for the error report! For the record, it unfortunately seems that you'll need to request the "analytics-privatedata-users (no Kerberos, no ssh)" access group.

Tue, Apr 30

(Let's keep this task open and solve the root issue after the production error has been worked around.)

Mon, Apr 29

Fri, Apr 26

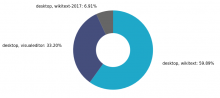

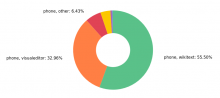

FWIW, I've been trying to add a simple line chart of editor interface popularity over time, but it's been unironically timing out.

Thu, Apr 25

Devs: it would be nice to get a second opinion on these SQL queries, especially some sanity checking of the way users and sessions are grouped.

Hypothesis 4 can be followed up in T363453: Question HTML dump page order.

Wed, Apr 24

Tue, Apr 23

Sneak preview for those playing at home:

When the metrics land, they should appear on https://prometheus-eqiad.wikimedia.org/analytics/targets?search=wmde_tewu .

Mon, Apr 22

This will simplify how we share monitoring duty during the long-running scrape job.

Stalled waiting for WMF legal review.

Well, it could be simple after all. Articles at the end are on average twice as long (by HTML length).
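A rough sketch of how the head-versus-tail length comparison could be reproduced in a single streaming pass. It takes a plain sequence of per-article HTML lengths in dump order; how those lengths are extracted from each record is left open here, and the function name and sample numbers are hypothetical.

```python
from collections import deque
from itertools import islice
from statistics import mean
from typing import Iterable, Tuple


def head_tail_mean(lengths: Iterable[int], n: int) -> Tuple[float, float]:
    """Mean length of the first n and the last n items of a stream.

    Only n items are kept in memory for each end. If the stream has fewer
    than 2 * n items, the two windows overlap. An empty stream raises
    statistics.StatisticsError.
    """
    it = iter(lengths)
    head = list(islice(it, n))
    # Seed the tail window with the head so short streams still work,
    # then let the bounded deque discard everything but the last n items.
    tail = deque(head, maxlen=n)
    tail.extend(it)
    return mean(head), mean(tail)


# Made-up lengths for illustration only.
lengths_sample = [10, 20, 30, 40, 50, 60]
print(head_tail_mean(lengths_sample, 2))
```

A tail mean of roughly twice the head mean would match the observation above, although length alone may not account for the full difference in processing rate.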

In this example, the segment on the left is processing the tail articles starting at the 2.6M'th row, and on the right we're processing the first articles in the dump.

Very surprisingly to me, Hypothesis 4 seems to be the only validated theory. I haven't yet identified what makes the last articles harder to process, but the performance characteristics are almost perfectly repeatable when going back and forth between sets of articles at the beginning vs. the end of the dump. Initial articles can be processed at ~1.5k articles/s, and final articles at ~250 articles/s.

Fri, Apr 19

WIP on the low-level-concurrency branch will let us experiment with per-page timeouts and debugging.

Thu, Apr 18

This may be related to T362894: Data quality: HTML dumps contain unexplainably outdated revisions of some pages. The duplicates seem to have various revision IDs. Here's a command showing that one article is included three times with the same title and page ID, but at different versions:

tar xzf dewiki-NS0-20240201-ENTERPRISE-HTML.json.tar.gz -O | jq 'select(.name == "10.000 B.C.") | .identifier,.version.identifier'
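To look for this pattern across the whole dump rather than one title, something like the following sketch could help. It assumes one JSON object per line and reuses the .identifier and .version.identifier fields from the jq filter above; the function name and the sample records are made up. Note that it only flags pages that appear with more than one distinct revision, not pages repeated at the same revision.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, Set


def find_multi_version_pages(lines: Iterable[str]) -> Dict[int, Set[int]]:
    """Map page id -> revision ids, for pages seen at more than one revision."""
    versions: Dict[int, Set[int]] = defaultdict(set)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between records
        record = json.loads(line)
        versions[record["identifier"]].add(record["version"]["identifier"])
    return {page: revs for page, revs in versions.items() if len(revs) > 1}


# Hypothetical records, not taken from the real dump.
records_sample = [
    '{"name": "A", "identifier": 1, "version": {"identifier": 100}}',
    '{"name": "B", "identifier": 2, "version": {"identifier": 200}}',
    '{"name": "A", "identifier": 1, "version": {"identifier": 101}}',
    '{"name": "A", "identifier": 1, "version": {"identifier": 102}}',
]
print(find_multi_version_pages(records_sample))
```

In practice the lines could come from the output of the tar command above piped into the script's standard input, for example by passing sys.stdin to the function. Memory use grows with the number of distinct pages, which should be acceptable for a few million small integers.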

@BTullis Thanks for highlighting this possibility! I tried the Conda environment as you suggested and it works perfectly for our needs. Even at high concurrency, the performance seems to be the same as in the bare metal environment I had cobbled together previously.

Wed, Apr 17

Still seeing extreme swings in performance, following the same shape as before. Now with additional metrics:

Tue, Apr 16

Pulling this in because it would be nice to have, to debug the slowdown we see after the first 20 minutes or so.

Some of these packages already appear in debmonitor:

Hmm, spot-checking is only turning up articles which were created or moved after the snapshot date.